Thanks! Visual Studio Code is free, while I think PyCharm is subscription based. Is there a free version of PyCharm?

Yes, the free “community” version is here: https://www.jetbrains.com/pycharm/download/#section=mac

Also see this thread for additional info on setup:

Oh yes, my bad! Thanks for the videos.

Looking at blocks 107 and 108:

('ReLU-107',

OrderedDict([('input_shape', [-1, 512, 7, 7]),

('output_shape', [-1, 512, 7, 7]),

('nb_params', 0)])),

('BasicBlock-108',

OrderedDict([('input_shape', [-1, 256, 14, 14]),

('output_shape', [-1, 512, 7, 7]),

('nb_params', 0)])),

The output of block 107 is 512 x 7 x 7 and the input of block 108 is 256 x 14 x 14.

Is there any operation between the two layers which can explain that? I was thinking about the residual connection, but I don’t see how it could explain that. Do you have any hint?

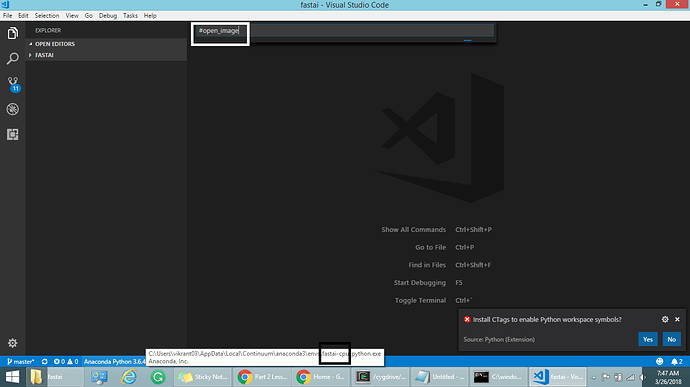

Thank you @jeremy & @asif.imran. I followed the 3 commands and was able to install fast-ai cpu on my machine. Then I selected the interpreter in VS Code.

After that, I searched for #open_image, but it doesn’t show any results. Any thoughts?

That’s kind-of true, although what’s “top” and “bottom” depends on your coordinate plane layout (as you noticed!). It’s very hard to write about all this in a way that’s clear and perfectly accurate! In this case the docs, when they say “bottom”, mean “lower numbered”.

Generally when we label coordinates of a cell in a matrix, or a pixel in an image, we order the y axis top to bottom. But in charting, we label it bottom to top. I’m not sure there’s a way to work around this confusion!

The important question is: have I managed to confuse myself and screw up the definition of any of the models as a result? Frankly, I’m never 100% sure… I think it’s all OK, and it seems to be working OK, but if you see anything that looks backwards please do feel free to experiment to see if you can get better results by changing things around.
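To make the two conventions above concrete, here is a minimal sketch in plain Python. The tiny `image` grid and the helper name `row_to_chart_y` are made up for illustration and are not part of the library being discussed.

```python
# A tiny "image" stored as a list of rows, the way a matrix or pixel array is laid out.
# Row 0 is the TOP row, so the y index grows downwards.
image = [
    ["a", "b"],  # row 0 (top of the image)
    ["c", "d"],  # row 1
    ["e", "f"],  # row 2 (bottom of the image)
]
height = len(image)


def row_to_chart_y(row, height):
    """Convert a matrix/image row index (top to bottom) to a chart y value (bottom to top)."""
    return height - 1 - row


# The top row of the image has the lowest row number but the highest chart y value.
print(row_to_chart_y(0, height))
print(row_to_chart_y(height - 1, height))
```

So “lower numbered” means “top” when you think in image rows, and “bottom” when you think in chart coordinates.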

Thank you very much Jeremy for your detailed explanation.

Following your suggestion, I will certainly test the results of the model after changing the corner points, and will post an update if there is a performance difference.

Slight clarification, it remembers it for the life of the shell. If you e.g. shut down your machine and start it up again, you’ll need to activate your conda environment again.

We may be discussing different things. I was referring to the selection of env/interpreter in vscode. This is remembered across sessions.

Please don’t feel bad. I am learning this myself.

So, I may have been too quick last time. You actually want to look at this:

('Conv2d-100',

OrderedDict([('input_shape', [-1, 256, 14, 14]),

('output_shape', [-1, 512, 7, 7]),

('trainable', False),

('nb_params', 1179648)])),

This corresponds to the start of the following block:

(7): Sequential(

(0): BasicBlock(

(conv1): Conv2d (256, 512, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True)

<removed>

So, if you put all the info together:

- input is 256 x 14 x 14

- each conv2d filter is a volume of 256 x 3 x 3

- number of output channels (filters) is 512

This gives you output that is 512 x 7 x 7

Best,

A

…because the stride is 2x2
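The arithmetic behind this can be checked with the standard convolution output-size formula. This is just a sketch in plain Python (the helper name `conv2d_output_size` is made up), using the kernel size, stride and padding from the `conv1` line printed above.

```python
def conv2d_output_size(size, kernel_size, stride, padding):
    """Spatial output size of a convolution along one dimension (no dilation)."""
    return (size + 2 * padding - kernel_size) // stride + 1


# conv1: Conv2d(256, 512, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)
out_size = conv2d_output_size(size=14, kernel_size=3, stride=2, padding=1)

# Weights: one 256 x 3 x 3 filter per output channel, and no bias terms.
n_params = 256 * 3 * 3 * 512

print(out_size)   # spatial size, so the output is 512 x out_size x out_size
print(n_params)   # compare with the nb_params reported for Conv2d-100
```

The stride of 2 halves the 14 x 14 grid to 7 x 7, and the parameter count agrees with the `nb_params` value of 1179648 in the summary.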

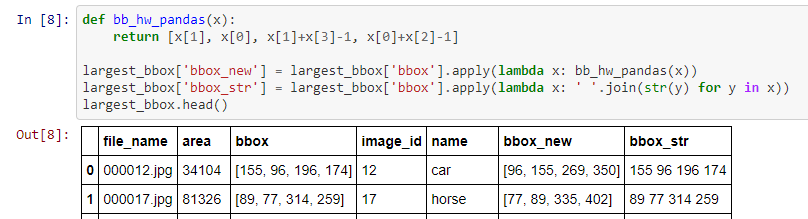

While I found it slightly difficult to wrap my head around collections.defaultdict and the many dictionaries used to create the final bounding box dataset (for the largest item classifier), I’ve put up a gist showing how to get this done quickly using pandas and a couple of apply commands.

Jeremy suggested improving the apply part (because it’s serial), and I’ll do that soon.

Ganesh from the SF weekend study group also shared this idea. Thank you.

Edit: Updated the link of the gist with the minor changes to the source code.
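For readers who can’t open the gist, here is a rough sketch of what a pandas version of this could look like. It is not the gist itself: the sample annotations are invented, the bbox layout is assumed to be `[x, y, width, height]`, and the column names `area`, `bbox_new` and `bbox_str` are just illustrative.

```python
import pandas as pd

# Hypothetical annotations, one row per object; bbox is assumed to be [x, y, width, height].
annotations = pd.DataFrame({
    "image_id": [12, 12, 17],
    "category_id": [7, 15, 13],
    "bbox": [[155, 96, 196, 174], [184, 61, 95, 138], [89, 77, 314, 259]],
})

# Area of each box, then keep the row with the largest area for every image.
annotations["area"] = annotations["bbox"].apply(lambda b: b[2] * b[3])
largest_bbox = annotations.loc[annotations.groupby("image_id")["area"].idxmax()].copy()

# Reorder to [top, left, bottom, right] (an assumed target layout for this sketch).
largest_bbox["bbox_new"] = largest_bbox["bbox"].apply(
    lambda b: [b[1], b[0], b[1] + b[3] - 1, b[0] + b[2] - 1]
)

# Space-separated string form, e.g. for writing to a CSV file.
largest_bbox["bbox_str"] = largest_bbox["bbox_new"].apply(
    lambda b: " ".join(str(v) for v in b)
)

print(largest_bbox[["image_id", "category_id", "bbox_str"]])
```

The `groupby(...).idxmax()` step replaces the dictionary bookkeeping; the two remaining `apply` calls are the serial part mentioned above.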

Yeah I like this a lot better than how I did it.

I am a mac user too and vouch for PyCharm.

Hmm, thanks. I think I understand this part well.

But do you have any idea why the output of block 107:

('ReLU-107',

OrderedDict([('input_shape', [-1, 512, 7, 7]),

('output_shape', [-1, 512, 7, 7]),

does not match the input of block 108:

('BasicBlock-108',

OrderedDict([('input_shape', [-1, 256, 14, 14]),

('output_shape', [-1, 512, 7, 7]),

How can this “work” without any in-between operation?
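One possible explanation, offered as a guess rather than a confirmed answer: if the summary is built from forward hooks (an assumption about how it is produced), a container’s hook fires only after all of its children have run. BasicBlock-108 would then be the parent of Conv2d-100 through ReLU-107 rather than a layer that comes after them. The toy classes below are plain Python stand-ins, not the real library code.

```python
summary = []  # entries are appended in the order the "hooks" fire


class Leaf:
    """Stand-in for a simple layer; records itself once its forward pass is done."""

    def __init__(self, name, out_shape=None):
        self.name, self.out_shape = name, out_shape

    def __call__(self, shape):
        out = self.out_shape or shape  # layers without out_shape keep the shape
        summary.append((self.name, shape, out))
        return out


class Block:
    """Stand-in for a container; it is recorded only AFTER its children have run."""

    def __init__(self, name, layers):
        self.name, self.layers = name, layers

    def __call__(self, shape):
        out = shape
        for layer in self.layers:
            out = layer(out)
        summary.append((self.name, shape, out))
        return out


block = Block("BasicBlock", [Leaf("Conv2d", (512, 7, 7)), Leaf("BatchNorm2d"), Leaf("ReLU")])
block((256, 14, 14))

for name, in_shape, out_shape in summary:
    print(name, in_shape, out_shape)
```

In this toy the block is listed last, with the block’s own input (256 x 14 x 14) and the output of its last child (512 x 7 x 7), which is the same pattern as in the summary above.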

Install CTAGs…

- Download from http://ctags.sourceforge.net/

- Unzip in any folder

- Add that folder to the PATH environment variable.
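A quick way to check that the PATH change took effect is to ask Python where it finds the executable. This is only a convenience sketch using the standard library; the helper name `find_tool` is made up, and the executable is assumed to be called `ctags`.

```python
import shutil


def find_tool(name):
    """Return the full path of an executable found on PATH, or None if it is missing."""
    return shutil.which(name)


ctags_path = find_tool("ctags")
print(ctags_path if ctags_path else "ctags not found on PATH")
```

If it prints “not found”, open a new terminal (so the updated PATH is picked up) and try again.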

Should we change from ['bbox'] to ['bbox_new'] as below? Otherwise, bbox_str won’t capture the new orientations.

largest_bbox['bbox_str'] = largest_bbox['bbox_new'].apply(lambda x: ' '.join(str(y) for y in x))

Oh yes. You’re right. I’ll update the gist in a while.

Thank you.

Edit: Done.

The argument to the open_image function has to be cast to a string. So use it like this: open_image(str(IMG_PATH/trn_fns[i]))

Cast the argument to a string and use it like this:

open_image(str(IMG_PATH/trn_fns[i]))
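To show what the cast does, here is a small sketch with the standard library only. The folder and file name are invented placeholders, and the reason given in the comment (that the image loader underneath expects a plain string) is an assumption, not something confirmed in this thread.

```python
from pathlib import Path

# Hypothetical values standing in for the notebook's IMG_PATH and trn_fns.
IMG_PATH = Path("data") / "pascal" / "JPEGImages"
trn_fns = {12: "000012.jpg"}

path_obj = IMG_PATH / trn_fns[12]  # the "/" operator builds a Path object, not a string
path_str = str(path_obj)           # plain string, as (presumably) expected by the loader

print(type(path_obj).__name__)
print(type(path_str).__name__, path_str)
```

So `IMG_PATH/trn_fns[i]` on its own is a `Path`, and wrapping it in `str(...)` hands the function an ordinary string.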

I have an error in F.softmax(predict_batch(learn.model,x),-1):

x,y=next(iter(md.val_dl))

probs=F.softmax(predict_batch(learn.model,x),-1)

x,preds=to_np(x),to_np(probs)

preds=np.argmax(preds,-1)

NameError: name 'predict_batch' is not defined

I have searched for predict_batch in the whole notebook, but it wasn’t there. Maybe it was used in past lectures. Please help me.

Thanks in advance.