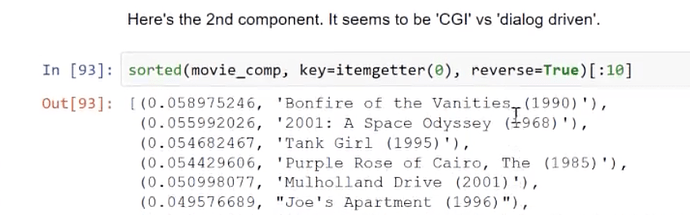

Thanks!

isn’t the embedding matrices size match properly or broadcasting is used?

EmbeddingDotBias (

(u): Embedding(671, 50)

(i): Embedding(9066, 50)

(ub): Embedding(671, 1)

(ib): Embedding(9066, 1)

)

I guess fastai still doesn’t support CPU?

Do you mean that the number of users and the number of items (movies) are different, or that they have a different number of factors between the user/item embeddings and the bias embeddings?

The model is running for a single user/movie pair, and uses dense layers to map between the embedding and bias values into a single prediction.

It checks out.

I Couldn’t find the ‘dislike’ button on the forums for this comment

Why the transpose of embedding matrix is used for computing PCA?

I assumed that the likes were ironic  (including mine)

(including mine)

Take a careful look the formula.

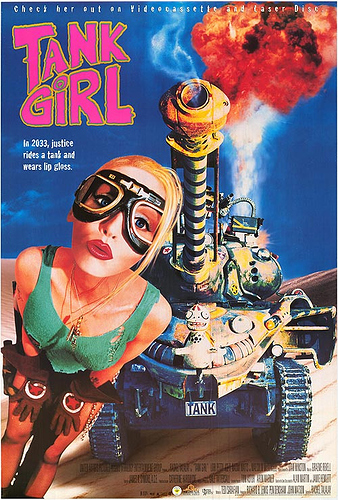

“Tank girl” is dialog driven?

https://www.movieposter.com/posters/archive/main/95/MPW-47507Could it be how surreal or satiric the movies are? But then where is Momento?

Where is that formula located?

Correct Answer: https://github.com/fastai/fastai/blob/master/fastai/column_data.py (Line 184)

forward function inside the class.

From the fellows who had done this course previously(this helps a lot)

I’ll make a forum post. I’m still a bit confused.

no, look at the collaborative filtering model. Here

class EmbeddingDotBias(nn.Module):

def __init__(self, n_factors, n_users, n_items, min_score, max_score):

super().__init__()

self.min_score,self.max_score = min_score,max_score

(self.u, self.i, self.ub, self.ib) = [get_emb(*o) for o in [

(n_users, n_factors), (n_items, n_factors), (n_users,1), (n_items,1)

]]

def forward(self, users, items):

um = self.u(users)* self.i(items)

res = um.sum(1) + self.ub(users).squeeze() + self.ib(items).squeeze()

return F.sigmoid(res) * (self.max_score-self.min_score) + self.min_score

what would be the difference between shallow embedding and deep learning embedding ?

- shallow : get through s dot product matrix multiplication

- deep learning: initiate several layers on the top of a one-hot encoding or any other classic categorical encoding

is it something like this?

Is Shallow learning on large datasets in NLP is faster to get Embeddings than doing a NeuralNet first on?

What was the last question? Didn’t hear it

They were asking about applying some techniques from CV to NLP.