Has anybody any idea about which paper ( any link or title) @jeremy talks at 15 minute of the video. The one that supposedly shows convolution networks supremacy over recurrent ones?

Thanks, that was fast !

Hey all,

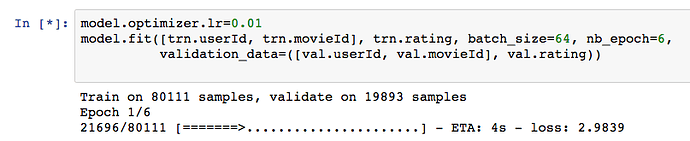

I’m having some trouble with Jupyter notebook while fitting the movielens models. For some reason, whenever I go to fit, I’ll start getting updates, and then the screen hangs here:

It’ll sit like that for 4-5 minutes, all the while nvidia-smi says nothing is going on and top on my P2 instance says nominal CPU usage. However, locally Chrome has a CPU pegged.

After ~100 seconds, I get this error from Jupyter notebooks log:

[W 18:31:45.763 NotebookApp] WebSocket ping timeout after 94698 ms.

I’m wondering if Jupyter notebook is trying to update that progress bar via websocket pings so frequently that it kills my poor little Macbook air. Does anyone know how to make Jupyter rate limit how quickly it updates the browser client?

I know this is off-topic, but figured others may run into the same problem

Thanks!

set verbose parameter in model.fit to 0 or 2

I have a question about collaborative filtering, based on the MovieLens dataset.

Let’s say there is only one movie in the world, and 101 users.

For the first 100 users, we know about their age, name, their favorite color, hometown, etc.

All of them watched the movie, and we know if they liked the movie or not.

We want to know if the 101th user will like the movie.

In this case, collaborative filtering doesn’t work and we must resort to the meta data approach, right?

I’m having some trouble reproducing @jeremy’s results on this notebook. I’ve tried running both Theano and Tensorflow and the best I can do on the Matrix Factorization of the bias is around 1.02, which is significantly higher than the reported 0.79.

Is anyone able to get numbers in the 0.79 ballpark for matrix factorization with Bias?

I’m able to get closer on the Neural net example; around 0.78 consistently, although getting there required relaxing the regularization significantly (1e6). In one of my runs I lucked out and really found a good minima (0.7019) but i’m not able to reproduce those results consistently.

I’ve been playing around with collaborative filtering on this old kaggle competition. Using both the linear model and the neural net model from this lecture I have been able to score around 0.270 which isn’t as good as the sample submission (~0.257) and a ways off from the leader (~0.246).

This competition is neat because Steffen Rendle unleashed LibFM, won the competition by a wide margin, and it makes for a nice benchmark!

I’ve been trying beat the top score with deep learning without success (yet). Would it make sense to add the bias terms to the Input layer of the neural net (like in the linear model)? Has anyone else looked at this data set?

Hi all!

I started to play around with neural net + embeddings. The network follows the architecture proposed in the lecture. However, at test time, I don’t try to see if predict(user,movie) is close to rank[user, movie] but try to apply a real life scenario. That is:

given an user u:

for m in all_movies:

predict(u,m)

argsort(predictions) in reverse order

return top 5

The problem is that almost always I get some highly reccomended movies! Here is an example with some user IDs:

[1945, 2381, 4040, 4199, 241]

[1945, 4199, 2381, 4040, 241]

[1945, 2381, 4199, 241, 1807]

[1945, 2381, 4199, 4040, 241]

[1945, 4040, 2381, 4199, 241]

[1945, 2381, 4040, 4199, 1807]

I noted in the video that we use bias for dot product, just to remove those highly rated movies. However, it seems that the regular neural net can’t handle well these highly liked movies. Any hints on what should I change?

Thank you,

Cristi

Great challenge! To get a reasonable result, you’ll need to use the “side information”. Here’s a nice paper showing how different bits of data and different ensembling approaches contributed to the 5th-placed team: http://cseweb.ucsd.edu/~ranil/Report.pdf . Using a similar approach but with a neural net may give a boost over their results.

I was reviewing the notebook for this class and started wondering about the Dot product model, which was brilliantly explained in Excel as well as through a Keras implementation. In particular, I was wondering whether or not there is any difference between a dot product with user and movie embeddings with, say, 5 latent factors, and a singular value decomposition with 5 singular values/vectors.

As It looks like in both cases the only things that are computed are dot products, I would imagine they must yield the same results (where perhaps the dot product model has the singular values already factored in the latent factors)? Yet, I made a quick prototype to compare the two and could not get the same results.

- Is that an issue due to the solver strategy (tperhaps because of the different ways the eigenvalue/eigenvector problem is solved in SVD vs the Adam with regularization in Keras)?

- Is there something fundamental that I am missing?

Thanks in advance for your input!

Notebook Reproducibility.

Hi everyone,

More on the Lesson 4 recommender systems. I have been unable to reproduce the results that Jeremy obtained with the Dot Product model, Bias model and Neural Net. After having failed to do so in my own implementation, I tried to run the lesson4 notebook exactly as it is (same input, random seeds, etc.) and still could not get the same results (I obtain 2.4 vs 1.4 and 1.14 vs 0.79, too far for being only due to initialization or other random effects, I believe). Has anyone experienced the same issue?

The only two things I can imagine playing a role are:

- I am using a newer version of Keras, which is throwing a warning about the merge layer (but compiles and runs fine).

- Jeremy performed some data preprocessing that does not appear in the notebook.

Any thoughts? I am still also curious to know about my question above, if anyone is able and willing to shed any light on it.

Thanks!

Very similar, except that the version we see in class ignores missing values, whereas SVD generally will consider them equal to zero. Which results in very different models!

Here’s a classic paper on the general approach we’re using: https://papers.nips.cc/paper/3208-probabilistic-matrix-factorization.pdf

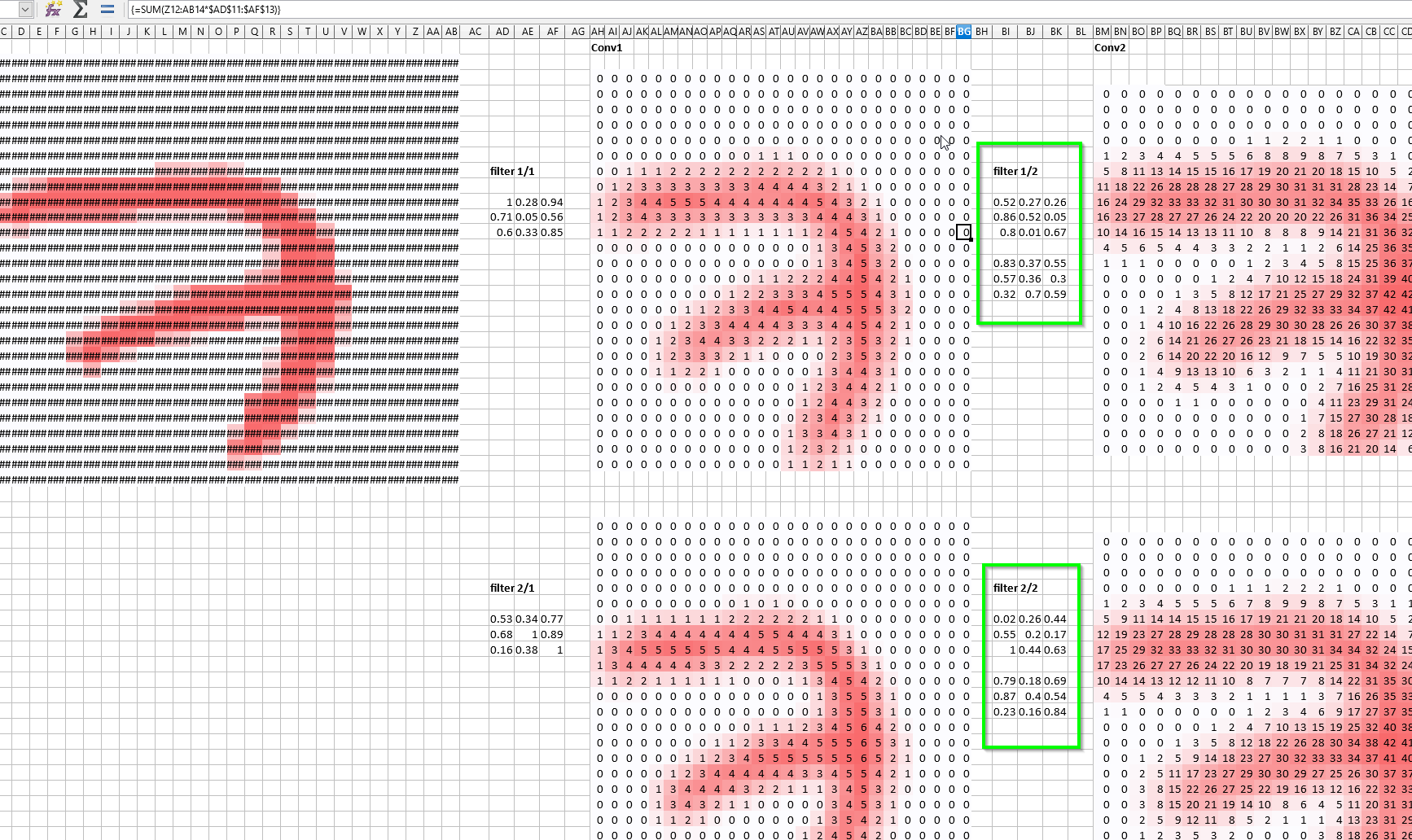

A question regarding the spreadsheet, which is around 8min in the video, how/why did we go from a 3x3 filter to a 3x3x2 tensor filter in the second convolution layer? The image still has the same resolution as the first convolution layer. Also, why don’t we need this in the first player and only use a 3x3 filter there?

Each filter in the second convolutional layer needs to be applied to every output (channel) of the previous layer. If you did so without a tensor, you would be forced to use the same weights for every channel, which would translate into a rather inflexible architecture. You should be able to see where each filter acts on the spreadsheet.

It is not really a matter of resolution, ultimately, as each convolutional layer does not change the resolution of your input, but creates as many “filtered” copies of it as you have filters. I believe the wiki for this lesson notes explains it more eloquently than I do here, though.

Thanks a lot for the reply and for the paper!

I have been playing with all these models to get an idea of their respective performance and I have been stumbling on a few unexpected obstacles. I now believe there is an issue in the loss function evaluation in Keras 2, so if any of you is experiencing the same problem or wants to check independently, please do so.

Specifically:

Running the Bias model with Jeremy implementation, I see a validation loss (MSE) of 1.0278 in the callbacks. If I call model.evaluate, I also see the same exact number. However, if I manually compute the MSE and the RMSE from model.predict compared to the ground truth, I see, respectively, 0.79 and 0.89, exactly in line with Jeremy’s original notebook. I have gone crazy over this over the last few days and perhaps there is something that I am missing, so I am wary of raising an issue with keras and ending up looking like a fool because I have missed something trivial.

On a side note, this is the usage of merge layers in keras 2, for anyone interested:

Keras 1:

x = merge([u, m], mode=‘dot’)

x = merge([x, ub], mode=‘sum’)

Keras 2:

from keras.layers.merge import dot, add

x = dot([u, m], axes=(2,2))

x = add([x, ub])

I have gone crazy over this over the last few days and perhaps there is something that I am missing, so I am wary of raising an issue with keras and ending up looking like a fool because I have missed something trivial.

I’d raise it anyway - just be sure to clearly show your replication steps. I’m sure it’ll be appreciated.

Thanks for your input, Jeremy. I did raise the issue with keras, hopefully it will be of help.

I have also written a blog post comparing some simple implementation of recommender engines here. It is nothing special - the techniques are all basic and a few implementations are taken almost entirely from the MOOC - but I was curious to see how different recommenders compare to each other. The MOOC is credited and linked, of course (I imagine it is ok to do so - and I do not expect many people to read my blog anyway  - but do let me know if anything is not cool, one can never be too careful). I was also thinking to write a few additional blog posts about some of the other material from the MOOC, mostly to motivate myself to really learn the material.

- but do let me know if anything is not cool, one can never be too careful). I was also thinking to write a few additional blog posts about some of the other material from the MOOC, mostly to motivate myself to really learn the material.

Thanks for all the effort, material and assistance. You guys are truly amazing.

Thanks for sharing your post - congrats on putting it together!

My main question is: have you checked that you are excluding the missing matrix entries when you calculated MSE? If not, then you’re giving incorrect results, since you’re mainly looking for models that predict zero for the missing results, rather than actually finding good ratings. It’s easier to do this if you don’t use a matrix structure, but instead leave the list of ratings as a list of movie/user/rating tuples. (You can still create a matrix for your SVD step, of course, but by not using it elsewhere you keep things simpler and more accurate).

BTW, I found the code snippets very hard to read, due to the grey-on-grey color scheme.

Jeremy, to answer your question, yes, I made sure that I excluded the zeros from RMSE calculations, as well as from the recommender systems implementations so the procedure should be conceptually correct (well, in SVD I did not really exclude the zeros, I decomposed a matrix of delta ratings from the mean and kept the zeros, which should be equivalent to a mean value imputation of missing ratings).

Thanks a lot for the feedback on the color-scheme, I think I will go ahead and find a better one.

In general, I really do not know how you find the time to answer all these questions on the forum. I was really not expecting that you yourself intervened in this conversation and I admit that I almost did not reply to your last post lest to take more of your time.