Thanks Jeremy, I just discovered that the problem was that I did not set shuffle=False in the batches. I found this by reading one of your replies on the State Farm forum. Thanks!

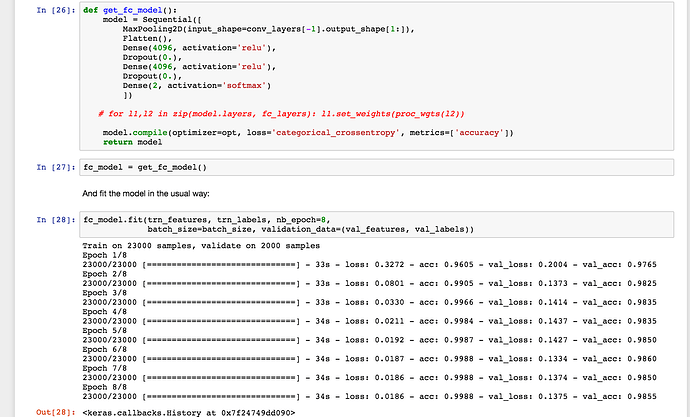

I am wondering what the purpose was of loading in the weights of the previous model trained in lesson 2. I commented out the portion that loaded those weights and ran the training, and the model seems to perform equivalently (see screenshot below). This seems preferable to the hassle of loading the previous weights and rescaling them due to the absence of dropout.

Is there something I’m missing? Is there another reason that we would want to reload the weights for the dense layers as opposed to re-training? Or was this done in lecture for illustrative purposes to show how to do this? I intuitively understand the advantage of initializing your weights from a previously known result, but I guess it didn’t add much here?

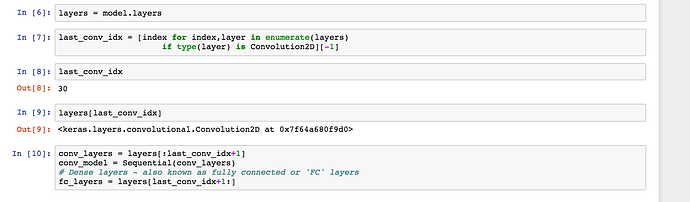

I have an additional question regarding saving the pre-calculated input data in Lesson 3. Using the code below (see screenshot), we grab the layers of the model up to the last convolutional layer. However, this doesn’t make sense to me. Why don’t we grab everything up to and including the Flatten layer, so that our pre-calculated input data is already flattened, with max pooling already applied? The MaxPooling2D layer and the Flatten() layer are not getting “trained” in the fully connected model, so why do we keep these layers there?

I will say that saving the input data with these two layers does take considerably longer than without.
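The point that pooling and flattening have nothing to train can be checked on a toy example. This is only a sketch on plain Python lists, not the notebook's code: max_pool_2x2, flatten and the 4x4 conv_output are made-up illustrations. Because neither step has weights, applying them before saving or after loading yields the same input for the dense layers.

```python
def max_pool_2x2(fmap):
    """2x2 max pooling with stride 2 over one 2-D feature map (a list of rows)."""
    return [[max(fmap[r][c], fmap[r][c + 1], fmap[r + 1][c], fmap[r + 1][c + 1])
             for c in range(0, len(fmap[0]) - 1, 2)]
            for r in range(0, len(fmap) - 1, 2)]

def flatten(fmap):
    """Concatenate the rows of a 2-D feature map into one flat list."""
    return [v for row in fmap for v in row]

# Made-up output of the last convolutional layer for one image and one channel.
conv_output = [[1, 3, 2, 0],
               [4, 2, 1, 1],
               [0, 1, 5, 6],
               [2, 2, 7, 3]]

# Option A: store the raw conv output, pool and flatten inside the FC model.
stored_a = conv_output
fc_input_a = flatten(max_pool_2x2(stored_a))

# Option B: pool and flatten once, then store the result.
stored_b = flatten(max_pool_2x2(conv_output))
fc_input_b = stored_b

print(fc_input_a, fc_input_b)
```

In this toy case option B also stores 4 values per feature map instead of 16.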

EDIT: I watched the video for Lesson 4, and Jeremy answers this question around 1:17:00.

When I change the code so that conv_model includes the Flatten layer (notice how I commented things out in get_fc_model()), things work exactly the same, so I’m wondering if there is a reason Jeremy retained the first two layers?

    def get_fc_model():
        model = Sequential([
            # MaxPooling2D(input_shape=conv_layers[-1].output_shape[1:]),
            # Flatten(),
            Dense(4096, activation='relu', input_shape=conv_layers[-1].output_shape[1:]),
            Dropout(0.),
            Dense(4096, activation='relu'),
            Dropout(0.),
            Dense(2, activation='softmax')
        ])
        #for l1,l2 in zip(model.layers, fc_layers): l1.set_weights(proc_wgts(l2))
        model.compile(optimizer=opt, loss='categorical_crossentropy', metrics=['accuracy'])
        return model

Hi,

I was thinking about the State Farm problem and had some thoughts and questions about it.

a. The State Farm problem seems to have less variety in the types of objects present in the images, i.e. each image shows a person and some objects inside a car. Given that VGG16 was designed and trained to detect a much wider set of objects, it feels like overkill to use all the convolutional layers of VGG16 as is for the State Farm problem. Any comments or insights on this?

b. State Farm image categorization is determined mainly by the position of objects relative to each other, i.e. ‘hands-on-steering-wheel’ implies an undistracted driver, whereas ‘hands-on-something-else’ implies a distracted driver. How can the fact that the actual objects matter less than their relative positions be factored into the model architecture? Any insights or guidelines on this?

c. Unrelated to State Farm: what are the relative tradeoffs of using a larger convolution kernel (e.g. 5x5 instead of 3x3)?

Thanks,

Ajith
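On question (c), one tradeoff that is easy to quantify is parameter count versus receptive field. The sketch below is plain arithmetic rather than an answer from the course: conv_params and stacked_receptive_field are hypothetical helper names, and 64 channels is an arbitrary illustrative value. It compares a single 5x5 layer with two stacked 3x3 layers, which cover the same 5x5 input window.

```python
def conv_params(kernel, in_ch, out_ch):
    """Weights plus biases of one conv layer with a square kernel."""
    return kernel * kernel * in_ch * out_ch + out_ch

def stacked_receptive_field(kernel, n_layers):
    """Receptive field of n stacked stride-1 convolutions with the same kernel."""
    return 1 + n_layers * (kernel - 1)

channels = 64  # illustrative value, not taken from the thread

one_5x5 = conv_params(5, channels, channels)
two_3x3 = 2 * conv_params(3, channels, channels)

print("one 5x5 layer :", one_5x5, "params, receptive field",
      stacked_receptive_field(5, 1))
print("two 3x3 layers:", two_3x3, "params, receptive field",
      stacked_receptive_field(3, 2))
```

The stacked version also applies a nonlinearity between the two layers, at the cost of one more layer to compute.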

Is there any reason, other than ease of production, that you would necessarily make all models in an ensemble of the same architecture? e.g. maybe you make 10 models total, 2 each of 5 slightly different architectures (say varying # of FC layers post conv blocks)

Any help here?

The model is ready and I am loading test batches and predicting them:

    test_batches = get_batches(path+'test', batch_size=64, shuffle=False)
    Found 12500 images belonging to 1 classes.

    imgs, labels = next(test_batches)
    preds = final_model.predict(imgs, False)

    ---------------------------------------------------------------------------
    ZeroDivisionError                         Traceback (most recent call last)
    <ipython-input-40-1668c7ba3d55> in <module>()
         20
         21 # run images through prediction method
    ---> 22 preds = final_model.predict(imgs, False)
         23
         24 for idx, _ in enumerate(preds[1]):

    /home/ubuntu/anaconda2/lib/python2.7/site-packages/keras/models.pyc in predict(self, x, batch_size, verbose)
        721         if self.model is None:
        722             self.build()
    --> 723         return self.model.predict(x, batch_size=batch_size, verbose=verbose)
        724
        725     def predict_on_batch(self, x):

    /home/ubuntu/anaconda2/lib/python2.7/site-packages/keras/engine/training.pyc in predict(self, x, batch_size, verbose)
       1218         f = self.predict_function
       1219         return self._predict_loop(f, ins,
    -> 1220                                   batch_size=batch_size, verbose=verbose)
       1221
       1222     def train_on_batch(self, x, y,

    /home/ubuntu/anaconda2/lib/python2.7/site-packages/keras/engine/training.pyc in _predict_loop(self, f, ins, batch_size, verbose)
        886         if verbose == 1:
        887             progbar = Progbar(target=nb_sample)
    --> 888         batches = make_batches(nb_sample, batch_size)
        889         index_array = np.arange(nb_sample)
        890         for batch_index, (batch_start, batch_end) in enumerate(batches):

    /home/ubuntu/anaconda2/lib/python2.7/site-packages/keras/engine/training.pyc in make_batches(size, batch_size)
        262     '''Returns a list of batch indices (tuples of indices).
        263     '''
    --> 264     nb_batch = int(np.ceil(size / float(batch_size)))
        265     return [(i * batch_size, min(size, (i + 1) * batch_size))
        266             for i in range(0, nb_batch)]

    ZeroDivisionError: float division by zero

The batch_size is 64, and I have confirmed this by checking test_batches.batch_size. I also printed out imgs and labels, and they contain data. Is there another batch_size I am not seeing?

So you are trying to predict on a single batch as a test?

Note that final_model is not Jeremy’s wrapper but a plain keras.models.Sequential, and the parameters you pass to predict do not match Sequential.predict: as the traceback shows, its second positional argument is batch_size, so False ends up being used as the batch size. See the documentation: https://keras.io/models/sequential/#predict
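The failure can be reproduced without Keras. This is a minimal sketch: make_batches is re-typed from the traceback lines quoted above, with math.ceil swapped in for np.ceil so that it runs without NumPy, and the sample counts are illustrative values only.

```python
import math

def make_batches(size, batch_size):
    """Re-typed from the traceback above; math.ceil stands in for np.ceil."""
    nb_batch = int(math.ceil(size / float(batch_size)))
    return [(i * batch_size, min(size, (i + 1) * batch_size))
            for i in range(0, nb_batch)]

# predict(imgs, False) binds False to the batch_size parameter,
# and float(False) is 0.0, hence the division by zero.
try:
    make_batches(64, False)
    error = None
except ZeroDivisionError as exc:
    error = exc

print(repr(error))

# With a real batch size the same call succeeds.
batches = make_batches(64, 32)
print(batches)
```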

If you want to do prediction on a single batch, as in your code, change your solution to

    test_batches = get_batches(path+'test', batch_size=64, shuffle=False)
    imgs, labels = next(test_batches)
    preds = final_model.predict(imgs, batch_size=64)

If you instead want to run it for all test batches:

    test_batches = get_batches(path+'test', batch_size=64, shuffle=False)
    # In Keras 1.x the second argument is the number of samples to predict, not the batch size
    preds = final_model.predict_generator(test_batches, test_batches.nb_sample)

Thanks… Works perfectly.

Is there a way to find out the dropout rate of an existing Dropout layer?

Questions on the MNIST notebook:

- For the “VGG-style” CNN, how did you choose the number of filters in the two conv layers? Is there some general methodology or is it black magic?
- Similar question on the Dense layers: how do you determine the number of outputs? For example, VGG has two Dense layers with 4096 outputs, while the MNIST example here has one with 512, but I don’t understand the rhyme or reason behind the choices.
- How come you didn’t use zero-padding layers to ensure the 3x3 filters passed evenly over the entire 28x28 images?
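For the zero-padding question, the effect on image size can be worked out with the standard convolution output-size formula. This is a small sketch in plain Python; conv_output_size is a hypothetical helper name, not something from the notebook.

```python
def conv_output_size(n, kernel, padding=0, stride=1):
    """Output width (or height) of a convolution over an input of size n."""
    return (n + 2 * padding - kernel) // stride + 1

# 3x3 filter on a 28x28 image, no padding: the border pixels are never at the
# centre of a filter window, so each layer trims one pixel from every side.
no_pad = conv_output_size(28, 3)
no_pad_twice = conv_output_size(no_pad, 3)

# One pixel of zero padding keeps the size unchanged.
padded = conv_output_size(28, 3, padding=1)

print(no_pad, no_pad_twice, padded)
```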

When I pop a layer and then add another, the weights are lost! What is wrong?

    vgg = Vgg16()
    vgg.model.layers.pop()
    vgg.model.layers.append(Dense(2, activation='softmax'))
    vgg.model.layers[-1].get_weights()
    []

If I try to load the saved weights, nothing changes:

    vgg.model.load_weights(RESULTS_HOME_DIR+'/ft1_office.h5')
    vgg.model.layers[-1].get_weights()

The output is again []. I tried calling vgg.compile(), but again nothing changes.

@krasin you have to train the last layer while setting the rest of the layers to trainable=False; look at the finetune function in vgg16.py. You don’t have any weights for a Dense(2) layer that you could load.

I expected that the weights would be initialised with random values. Now I noticed that

    vgg.model.summary()

still shows output shape (None, 1000) instead of (None, 2).

And vgg.model.evaluate_generator(batches, batch_size) gives an error:

    ValueError: Error when checking model target: expected dense_7 to have shape (None, 1000) but got array with shape (14, 2)

@krasin have you compiled the model after changing your last layer?

How does your question relate to how weights are initialized?

Yes @Gelu74, I tried. Part of my exercise to reproduce the model without using ft(2) or finetune is here as a [GitHub gist](https://gist.github.com/krasing/6833180987e388473e05fa052a14e117). Error after error.

I don’t know whether the errors are because there are no weights; this was just a guess.

@krasing

Where do you get the ‘ft1_office.h5’ file from?

Instead of

    vgg.model.layers.pop()

I’d do

    vgg.model.pop()

and instead of

    vgg.model.layers.append(Dense(2, activation='softmax', input_shape=input_shape))

I’d do

    vgg.model.add(Dense(num_categories, activation='softmax'))
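Why editing the layers list directly misbehaves can be pictured with a toy container. This is a hypothetical stand-in, not Keras's actual implementation: ToyDense and ToySequential are invented names, and the sketch assumes only that the container creates a layer's weights and updates its own output shape inside add() and pop(), which is consistent with the empty weights and the stale (None, 1000) shape reported above.

```python
class ToyDense:
    """Toy layer: its weights exist only once build() has been called."""
    def __init__(self, units):
        self.units = units
        self.weights = []

    def build(self, input_dim):
        self.weights = [[0.0] * self.units for _ in range(input_dim)]


class ToySequential:
    """Toy container that does its bookkeeping inside add() and pop()."""
    def __init__(self, input_dim):
        self.input_dim = input_dim
        self.output_dim = input_dim
        self.layers = []

    def add(self, layer):
        layer.build(self.output_dim)    # weights are created here
        self.layers.append(layer)
        self.output_dim = layer.units   # output shape is updated here

    def pop(self):
        self.layers.pop()
        self.output_dim = self.layers[-1].units if self.layers else self.input_dim


# Editing the list directly skips build() and the shape bookkeeping.
direct = ToySequential(input_dim=8)
direct.add(ToyDense(5))
direct.layers.pop()
direct.layers.append(ToyDense(2))
print(direct.layers[-1].weights, direct.output_dim)

# Going through the container's own methods keeps everything in sync.
proper = ToySequential(input_dim=8)
proper.add(ToyDense(5))
proper.pop()
proper.add(ToyDense(2))
print(len(proper.layers[-1].weights), proper.output_dim)
```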

@Gelu74 Great, thanks! So I was wrongly popping and adding layers. Now everything works. The ‘ft1_office.h5’ file is just the one with the saved model weights.

Is it better to have a BatchNormalization() layer before or after a Dropout() layer? In the lesson BN is after D while in vgg16bn.py BN is before D.

I think I figured out how to recreate the missing weights file that is loaded by bn_model.load_weights('/data/jhoward/ILSVRC2012_img/bn_do3_1.h5'). Here is the code:

    bn_model = Sequential(get_bn_layers(p))

    def proc_wgts(layer, prev_p, new_p):
        scal = (1-prev_p)/(1-new_p)
        return [o*scal for o in layer.get_weights()]

    fitted_fc_layers = fc_model.layers[:4]+[BatchNormalization()]+fc_model.layers[4:6]+[BatchNormalization()]+fc_model.layers[6:]

    for l1,l2 in zip(bn_model.layers, fitted_fc_layers):
        if type(l1)==Dense: l1.set_weights(proc_wgts(l2, 0, p))

    bn_model.pop()
    for layer in bn_model.layers: layer.trainable=False
    bn_model.add(Dense(2,activation='softmax'))
    bn_model.compile(Adam(), 'categorical_crossentropy', metrics=['accuracy'])
    bn_model.fit(trn_features, trn_labels, nb_epoch=1, validation_data=(val_features, val_labels))
    bn_model.save_weights('bn_do3_1.h5')

This gives me good results, even after one epoch: 12s - loss: 0.0608 - acc: 0.9814 - val_loss: 0.0637 - val_acc: 0.984 and can be used to complete the lesson.
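The scale factor used in proc_wgts can be checked on plain numbers. This is a minimal sketch on Python lists rather than Keras layers; rescale_factor and rescale are hypothetical helper names and the weight values are made up. Whether any rescaling is needed at all depends on how the framework implements dropout, so treat this only as an illustration of the formula in the post.

```python
def rescale_factor(prev_p, new_p):
    """Same formula as scal in proc_wgts: (1 - prev_p) / (1 - new_p)."""
    return (1.0 - prev_p) / (1.0 - new_p)

def rescale(weights, prev_p, new_p):
    """Multiply every weight by the factor for moving from prev_p to new_p."""
    scal = rescale_factor(prev_p, new_p)
    return [w * scal for w in weights]

# Dropout removed (0.5 -> 0): the weights are halved.
halved = rescale([0.2, -0.4], 0.5, 0.0)

# Dropout introduced (0 -> 0.5): the weights are doubled.
doubled = rescale([0.2, -0.4], 0.0, 0.5)

print(halved, doubled)
```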

In the model ensembling for the MNIST data, we created 6 models as follows:

    models = [fit_model() for i in range(6)]

The stated purpose: “so here are 6 models, they’ve all been trained the same way but with different starting points.”

My question is: where in the code did we specify different starting points for training?
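One plausible reading, stated as an assumption because the notebook's fit_model is not shown in this thread: no starting point is set explicitly. Each call builds a fresh model, and the weights of a new layer are drawn from a random initializer, so six calls give six different starting points. The sketch below mimics that with the standard library; init_weights and this fit_model are hypothetical stand-ins, and the fixed seed is there only to make the sketch repeatable.

```python
import random

rng = random.Random(0)  # fixed seed only so that this sketch is repeatable

def init_weights(n):
    """Hypothetical initializer: draws n small random weights."""
    return [rng.uniform(-0.05, 0.05) for _ in range(n)]

def fit_model():
    """Hypothetical stand-in: a real version would build and train a network.

    Here it just returns the freshly initialized weights of a new model.
    """
    return init_weights(4)

models = [fit_model() for i in range(6)]

# Every model starts from a different draw, although the code is identical.
distinct = len(set(tuple(m) for m in models))
print(distinct)
```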