Regarding point 3: Jeremy said that the paper suggests simply copying the underrepresented classes to increase their count. I want to experiment with combining that copying with some data augmentation tricks too.
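A minimal sketch of oversampling by copying. It assumes the data is a pair of parallel Python lists of items and labels, and `oversample_by_copying` is a hypothetical helper, not part of fastai. Each smaller class is topped up with random duplicates until it matches the largest class. Random augmentation applied at load time would then make the duplicated examples differ from one another.

```python
import random
from collections import Counter

def oversample_by_copying(items, labels, seed=0):
    """Hypothetical helper: duplicate examples of smaller classes until every
    class has as many examples as the largest one."""
    rng = random.Random(seed)
    by_class = {}
    for item, label in zip(items, labels):
        by_class.setdefault(label, []).append(item)
    target = max(len(members) for members in by_class.values())

    out_items, out_labels = [], []
    for label, members in by_class.items():
        # keep every original, then add random copies to reach the target count
        extra = [rng.choice(members) for _ in range(target - len(members))]
        for item in members + extra:
            out_items.append(item)
            out_labels.append(label)
    return out_items, out_labels

items = ["a1", "a2", "a3", "a4", "b1"]
labels = ["cat", "cat", "cat", "cat", "dog"]
new_items, new_labels = oversample_by_copying(items, labels)
print(Counter(new_labels))  # Counter({'cat': 4, 'dog': 4})
```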

Because the training loss includes dropout. We’ll learn about this soon.
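To see why dropout makes the training loss look worse, here is a minimal sketch of inverted dropout in plain Python. It is a simplified illustration, not the fastai/PyTorch implementation. During training each activation is zeroed with probability `p` and the survivors are scaled by `1/(1-p)`. At evaluation time the input passes through unchanged, so the validation loss is computed with the full network while the training loss is computed with a randomly handicapped one.

```python
import random

def dropout(activations, p, training, rng=random):
    """Inverted dropout: during training, zero each activation with probability p
    and scale the survivors by 1/(1-p); during evaluation, return them unchanged."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be between 0 and 1")
    if not training or p == 0.0:
        return list(activations)
    keep = 1.0 - p
    return [a / keep if rng.random() < keep else 0.0 for a in activations]

rng = random.Random(0)
x = [1.0] * 10
print(dropout(x, p=0.5, training=False))          # unchanged: ten 1.0 values
print(dropout(x, p=0.5, training=True, rng=rng))  # each value is 0.0 or 2.0
```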

That paper is only relevant to folks with effectively infinite resources. IMHO it’s of little if any practical value.

We’re only using the learning rate finder from that paper, not the cyclical learning rates themselves. The annealing method we use is from https://arxiv.org/abs/1608.03983
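For reference, a sketch of the cosine annealing with warm restarts schedule (SGDR) from that paper. Within each cycle the rate follows lr = lr_min + 0.5 * (lr_max - lr_min) * (1 + cos(pi * t_cur / t_i)), then jumps back to lr_max at each restart. The function and parameter names below (`cycle_len`, `cycle_mult`, both counted in steps) are illustrative choices, not a library API.

```python
import math

def sgdr_lr(step, lr_max, lr_min=0.0, cycle_len=100, cycle_mult=1):
    """Learning rate at a given step under cosine annealing with warm restarts.
    Each new cycle is cycle_mult times longer than the previous one."""
    if cycle_len <= 0 or cycle_mult < 1:
        raise ValueError("cycle_len must be positive and cycle_mult >= 1")
    # locate the current cycle and the position within it
    t_cur, t_i = step, cycle_len
    while t_cur >= t_i:
        t_cur -= t_i
        t_i *= cycle_mult
    return lr_min + 0.5 * (lr_max - lr_min) * (1 + math.cos(math.pi * t_cur / t_i))

# Anneals from lr_max towards lr_min, then restarts at lr_max at step 100.
for step in (0, 25, 50, 75, 99, 100):
    print(step, round(sgdr_lr(step, lr_max=0.1), 4))
```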

Yes, exactly

Yup that’s exactly what we’ll be doing

You got it right

Yes there’s dropout. We’ll learn about that soon.

We don’t appear to be using any k-fold cross validation so far. Is this not needed because of TTA?

+ getting predictions on the training set through CV
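A minimal sketch of k-fold cross-validation that also produces out-of-fold predictions for the training set, so each row is predicted by a model that never saw it. It assumes generic `fit`/`predict` callables; the mean-predictor "model" is hypothetical and only there so the example runs. Folds are contiguous and unshuffled for simplicity.

```python
def kfold_indices(n, k):
    """Split range(n) into k contiguous, nearly equal folds (no shuffling)."""
    fold_sizes = [n // k + (1 if i < n % k else 0) for i in range(k)]
    folds, start = [], 0
    for size in fold_sizes:
        folds.append(list(range(start, start + size)))
        start += size
    return folds

def out_of_fold_predictions(X, y, fit, predict, k=5):
    """Return one prediction per training row, each made by a model
    trained on the other k-1 folds."""
    oof = [None] * len(X)
    for val_idx in kfold_indices(len(X), k):
        held_out = set(val_idx)
        train_idx = [i for i in range(len(X)) if i not in held_out]
        model = fit([X[i] for i in train_idx], [y[i] for i in train_idx])
        for i in val_idx:
            oof[i] = predict(model, X[i])
    return oof

# Hypothetical toy model: always predicts the mean of its training targets.
def fit_mean(X, y):
    return sum(y) / len(y)

def predict_mean(model, x):
    return model

X = list(range(6))
y = [0, 0, 0, 1, 1, 1]
print(out_of_fold_predictions(X, y, fit_mean, predict_mean, k=3))
```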

Yes, that’s a real issue in the unicorn community. In practice, you have to adjust your probabilities to ‘undo’ the over-sampling.
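One common way to do that adjustment is sketched below. It assumes the model’s probabilities reflect the (oversampled) class frequencies it was trained on, and that oversampling changed only the class priors. Multiply each class probability by true prior / training prior, then renormalise. `undo_oversampling` is a hypothetical helper name.

```python
def undo_oversampling(probs, true_priors, train_priors):
    """Reweight per-class probabilities by true_prior / train_prior and renormalise.
    Assumes oversampling changed only the class frequencies seen in training."""
    weighted = [p * t / s for p, t, s in zip(probs, true_priors, train_priors)]
    total = sum(weighted)
    return [w / total for w in weighted]

# Trained on a 50/50 oversampled set, but the real data is 90/10.
print(undo_oversampling([0.8, 0.2], true_priors=[0.9, 0.1], train_priors=[0.5, 0.5]))
```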

Yup, I did say that. Adding the validation set back in right at the end is fine; you can get a better final model this way. cc @yinterian

Yes, that’s (sort of) what we do for top-down.

That’s only necessary if you have such a small dataset that you can’t afford to put aside a validation set.

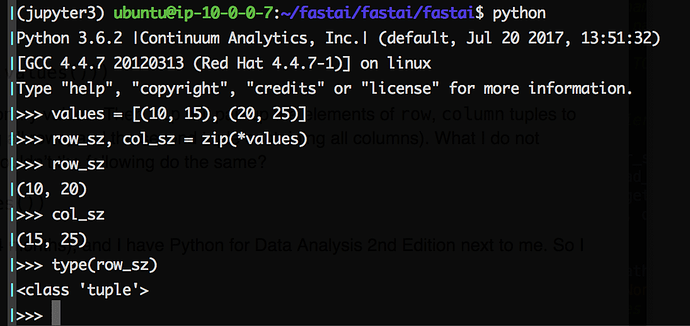

In today’s class, there was a snippet of code:

```python
row_sz, col_sz = list(zip(*size_d.values()))
```

I understand that `*` will unpack the dictionary values. Then `zip` will pair up the elements of the (row, column) tuples to create a list of tuples (the first tuple containing all the row sizes, and the second containing all the column sizes). What I do not understand is the role of `list` in this code. Wouldn’t the following do the same?

```python
row_sz, col_sz = zip(*size_d.values())
```

P.S. I’m fairly new to Python (about 4 months), and I have Python for Data Analysis, 2nd Edition, next to me, so I apologize if this is something obvious.

EDIT: what I wrote is completely wrong! Sorry for the noise.

You would be right in Python 2, but in Python 3 zip returns an iterator, not a list. So you need to force its evaluation with list in order to be able to unpack and assign it to row_sz and col_sz.

Hi @jacquerie!

Thank you for the response! I do see that zip returns an iterator, but I guess I’m not fully understanding why this works:

Thanks in advance!
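For what it’s worth, tuple unpacking in Python 3 accepts any iterable, including the iterator that `zip` returns. So `list(...)` is not required for this assignment. It only matters if you want to keep the zipped result around, index it, or iterate over it more than once. A self-contained check follows; the `size_d` below is made up in place of the one from class.

```python
# Made-up stand-in for size_d: maps file names to (rows, cols) image sizes.
size_d = {"a.jpg": (480, 640), "b.jpg": (500, 375), "c.jpg": (333, 500)}

# zip(*...) transposes the (rows, cols) pairs into one tuple of rows and one of cols.
row_sz, col_sz = list(zip(*size_d.values()))
row_sz2, col_sz2 = zip(*size_d.values())  # unpacking consumes the iterator directly

print(row_sz)                                   # (480, 500, 333)
print(col_sz)                                   # (640, 375, 500)
print(row_sz == row_sz2 and col_sz == col_sz2)  # True
```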

In case you still have issues with the Kaggle command line tool, try upgrading your installed kaggle-cli, because it was updated over the weekend.

I’m getting this error in Crestle. Do I just need to download that file and unpack the weight files into the right directory? (Or do I really need to stop using Crestle?)

```python
learn = ConvLearner.pretrained(arch, data, precompute=True, ps=0.5)
```

```
FileNotFoundError                         Traceback (most recent call last)
…
FileNotFoundError: [Errno 2] No such file or directory: '/home/nbuser/courses/fastai2/courses/dl1/fastai/weights/resnext_50_32x4d.pth'
```
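If the file really is just missing, a quick check before building the learner can confirm whether the weights are where fastai is looking. This is a minimal sketch: `missing_weights` is a hypothetical helper, and the directory is copied from the traceback, so adjust it for your own setup.

```python
from pathlib import Path

def missing_weights(weights_dir, filenames):
    """Hypothetical helper: return the expected weight files not present in weights_dir."""
    weights_dir = Path(weights_dir)
    return [name for name in filenames if not (weights_dir / name).is_file()]

# Directory taken from the traceback; it will differ on other installs.
weights_dir = "/home/nbuser/courses/fastai2/courses/dl1/fastai/weights"
missing = missing_weights(weights_dir, ["resnext_50_32x4d.pth"])
if missing:
    print("Missing weight files in", weights_dir, ":", missing)
```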