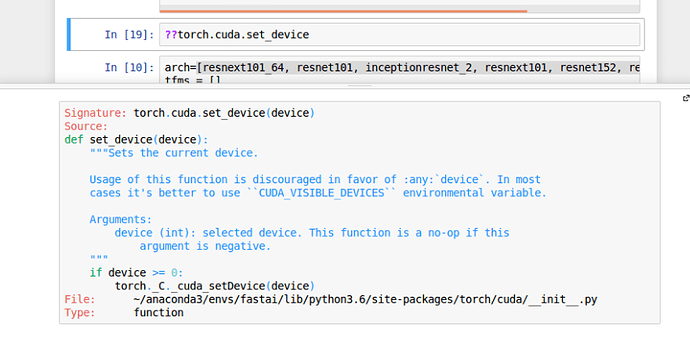

Don’t forget about ?? to see what different functions do as well. A good way to get an idea at least.
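Outside a notebook, where `??` is not available, the standard library's `inspect` module gives a rough equivalent. A minimal sketch, using `textwrap.dedent` purely as a stand-in for whichever function you are curious about (the helper name `show_source` is made up for this example):

```python
import inspect
import textwrap

def show_source(func):
    """Return the source code of a Python function as a string."""
    return inspect.getsource(func)

# textwrap.dedent is only a stand-in; pass any pure-Python function.
source = show_source(textwrap.dedent)
print(source[:200])
```

This only works for functions written in Python; built-ins implemented in C have no source to show.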

If you are running locally, try installing cuDNN 6.0.

That's because laptops nowadays have Optimus technology, which keeps switching between the GPU and the CPU (built-in graphics card).

No that’s not related to this issue. See my earlier reply for the resolution.

Creating a new dataset

@jeremy, please correct me if I’m wrong in understanding the steps for building a new dataset, say for a pen vs. pencil image classifier:

1. Download images of pens and pencils, say 100 each.

2. Put 70 of each in the training set and name every image after its class: pen_1.jpg, pen_2.jpg and so on, and pencil_1.jpg, pencil_2.jpg and so on.

3. Put 10 of each in the validation set, again named after the class: pen_71.jpg, pen_72.jpg and pencil_71.jpg, pencil_72.jpg.

4. Put 20 of each in the test set, but do not label them; leave them with the names they had when downloaded from the web.

Doubts:

1. Is my distribution correct, that is, 70% training set, 10% validation set and 20% test set?

2. Is my approach of not labeling the test set correct?

3. When labeling the dataset, should I name the images in sequence as I did above? Since this is a small dataset I can manage, but if the dataset consisted of 1000 images, how would that be possible?

Please guide me in clearing my doubts.

- I think you don’t need to rename them. In the ImageClassifierData.from_paths method the images are labeled by their subfolder names.

train/pen

train/pencil

valid/pen

valid/pencil

test

So that means I just need to download the images and put them in folders named pen and pencil respectively. Then there is no need to rename every pen and pencil image, and I can keep the names the images were downloaded with.

Did I understand your description correctly?

Yes. Just put them in the right folder.

Cool, thanks for your advice.
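For anyone who wants to automate the folder layout discussed above, here is a minimal sketch. It assumes the downloaded images already sit in one subfolder per class (for example `downloads/pen` and `downloads/pencil`); the function name `split_into_folders`, the paths, and the 20% validation fraction are all hypothetical choices for illustration, not part of the fastai library.

```python
import random
import shutil
from pathlib import Path

def split_into_folders(src_dir, dst_dir, valid_frac=0.2, seed=42):
    """Copy src_dir/<class>/* into dst_dir/train/<class> and dst_dir/valid/<class>.

    Returns a dict mapping (split, class_name) to the number of files copied.
    """
    src_dir, dst_dir = Path(src_dir), Path(dst_dir)
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    counts = {}
    for class_dir in sorted(p for p in src_dir.iterdir() if p.is_dir()):
        files = sorted(f for f in class_dir.iterdir() if f.is_file())
        rng.shuffle(files)
        n_valid = int(len(files) * valid_frac)
        splits = {"valid": files[:n_valid], "train": files[n_valid:]}
        for split, split_files in splits.items():
            target = dst_dir / split / class_dir.name
            target.mkdir(parents=True, exist_ok=True)
            for f in split_files:
                # The original file name is kept; the folder carries the label.
                shutil.copy2(f, target / f.name)
            counts[(split, class_dir.name)] = len(split_files)
    return counts
```

Calling `split_into_folders("downloads", "data/pens")` would then produce `data/pens/train/pen`, `data/pens/train/pencil`, `data/pens/valid/pen` and `data/pens/valid/pencil`, with no renaming of individual images.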

- If you are not that worried about your actual test error, you can use just a 70%-30% or 80%-20% split between train and validation (since you don’t have that many images).

- You don’t need to re-label images.

- Either put them in different folders (folder pen and folder pencil),

or - make a CSV file with each image name and the class it corresponds to:

image1,pen

image2,pencil
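If the images are already sorted into class folders, a labels file in the `image name,class` form shown above can be generated rather than typed by hand. A minimal sketch using only the standard library; the function name `write_labels_csv` and its `header` parameter are hypothetical, and whether your loader expects a header row is something to check, so it is left off by default here.

```python
import csv
from pathlib import Path

def write_labels_csv(image_root, csv_path, header=None):
    """Write one 'image name,class' row per image under image_root/<class>/.

    header: optional list of two column names; omitted by default (an assumption).
    Returns the list of (image_name, class_name) rows written.
    """
    rows = []
    for class_dir in sorted(p for p in Path(image_root).iterdir() if p.is_dir()):
        for img in sorted(f for f in class_dir.iterdir() if f.is_file()):
            # img.stem drops the file extension, e.g. image1.jpg -> image1
            rows.append((img.stem, class_dir.name))
    with open(csv_path, "w", newline="") as fh:
        writer = csv.writer(fh)
        if header is not None:
            writer.writerow(header)
        writer.writerows(rows)
    return rows
```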

About the distribution:

It depends on how many images you have. The main objective is to have a good estimator of your errors. For example, if you had 2 million images, you would most likely need only a couple of thousand for validation or testing.

Thanks for your advice. I’ll put them in different folders, and my test set will consist of a mix of pencil and pen images. Am I correct?

And ma’am, could you please explain why you mentioned "if you are not that worried about your actual test error"?

@naveenmanwani, I think she is referring to the fact that if you do as she suggests, you are not withholding any data for a test dataset, and therefore you can’t calculate test error.

Thank you for your reply. So that means, @memetzgz, I should put a considerable amount of data in my test set too?

@naveenmanwani, Have you considered using k-fold cross validation? That may help you squeeze the most informational “juice” out of your training/validation set. scikit-learn has a method for that, I believe.
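To make the k-fold idea concrete, here is a minimal pure-Python sketch of how the index splitting works. The function name `k_fold_indices` is made up for this example; in practice scikit-learn's `KFold` class in `sklearn.model_selection` does the same job, and the 200 samples below simply stand in for 100 pen plus 100 pencil images.

```python
import random

def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_idx, valid_idx) pairs; each sample is in validation exactly once."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    # Spread the remainder so fold sizes differ by at most one.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        valid_idx = indices[start:start + size]
        train_idx = indices[:start] + indices[start + size:]
        yield train_idx, valid_idx
        start += size

folds = list(k_fold_indices(n_samples=200, k=5))
for i, (train_idx, valid_idx) in enumerate(folds):
    print(f"fold {i}: {len(train_idx)} train, {len(valid_idx)} valid")
```

Each fold trains on 160 images and validates on the remaining 40, so every image contributes to both training and validation across the five runs. Note that this sketch does not balance classes within a fold.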

What does this line do in the function below? Please explain:

return data if sz>300 else data.resize(340,'tmp')

    def get_data(sz,bs):
        tfms = tfms_from_model(arch, sz, aug_tfms=transforms_side_on,max_zoom=1.1)
        data = ImageClassifierData.from_csv(PATH, 'train', f'{PATH}labels.csv', test_name='test', num_workers=4,
                                            val_idxs=val_idxs, suffix='.jpg', tfms=tfms, bs=bs)
        return data if sz>300 else data.resize(340,'tmp')

Please explain the importance of ps=0.5 in the code below:

learn = ConvLearner.pretrained(arch, data, precompute=True, ps=0.5)

We haven’t got to that yet - will do later in the course.

ok.thanks

We’ve discussed that a few times already - do a forum search, and if you don’t get the answer you need, please reply on one of the threads that have discussed these previously.

@jeremy If we are converting our images from matrix form to an image file like JPEG or PNG, is there an advantage to choosing one over the other in terms of performance? From what I have read, PNG uses lossless compression whereas there is information loss in the JPEG format. Does this information loss affect the performance of the model?

This certainly isn’t a beginner question. But yes, lossy compression does matter, so PNG may be better. You can always try both and see; it depends on the type of image.
