Can't get 97% accuracy (using Windows and TensorFlow backend)

Does everyone easily get to 97% accuracy on lessons 1 and 2? I get around 90% at most.

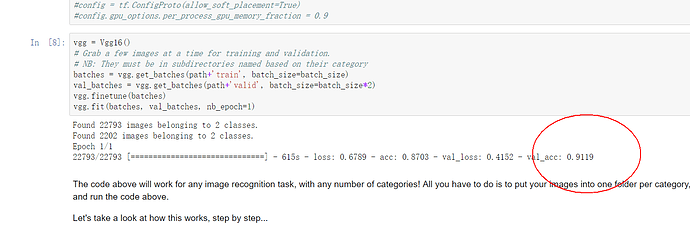

I tried a few learning rates, going as low as 3e-5. Loss and accuracy progress as we would normally expect during training, but they always stabilize around:

Loss: 0.2302 - acc: 0.9100 - val_loss: 0.1540 - val_acc: 0.9340

And that is after going up to 150 epochs…

I have to admit I am running this on a Windows PC with Python 3.6 and TensorFlow as the backend. My hunch is that I have my channels wrong or something like that.

I modified the source code following several forum posts on the subject.

So I did include stuff like:

K.set_image_dim_ordering('th')

in the code for vgg16.py

and I also have

%matplotlib inline

from keras import backend

backend.set_image_dim_ordering('th')

As my first cell in the notebook.
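To rule out a layout mix-up, it can help to look at the shape of a batch before it reaches the model. The sketch below uses only NumPy; `to_channels_first` and `looks_channels_first` are hypothetical helper names, not part of Keras or the course code. With 'th' ordering the model expects batches shaped (N, 3, 224, 224). On the Keras versions that provide `set_image_dim_ordering`, printing `K.image_dim_ordering()` after the first cell should report `'th'` if the setting took effect.

```python
import numpy as np

def to_channels_first(batch):
    """Convert an (N, H, W, C) batch to (N, C, H, W), i.e. 'th' ordering."""
    return np.transpose(batch, (0, 3, 1, 2))

def looks_channels_first(batch, n_channels=3):
    """Heuristic check: True if axis 1 of a 4-D batch holds the colour channels."""
    return batch.ndim == 4 and batch.shape[1] == n_channels

# A dummy batch in 'tf' (channels-last) layout
tf_batch = np.zeros((8, 224, 224, 3), dtype=np.float32)
th_batch = to_channels_first(tf_batch)

print(th_batch.shape)                  # (8, 3, 224, 224)
print(looks_channels_first(tf_batch))  # False
print(looks_channels_first(th_batch))  # True
```

Running `looks_channels_first` on one batch drawn from the training generator would show which layout the generator is actually producing.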

Included is the graph I see in TensorBoard. The top layers don't look like what I would expect: the dense_3 node, which I deduce is the layer we pop to fine-tune, is dangling up there, sending its output to no one. dense_4 outputs a 2-label tensor, so that bit looks OK. But maybe that is just Keras doing its normal internal bookkeeping on top of what I define.

Did I miss anything?

Here is my code below. Any hints on what else I should check?

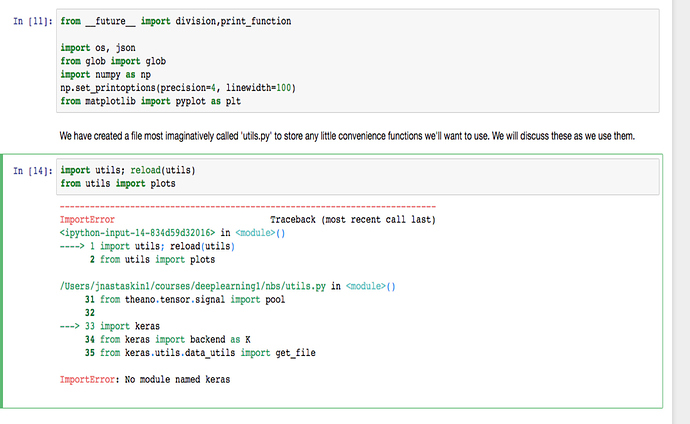

from __future__ import division, print_function

import os, json
from IPython.display import display
from math import ceil
from glob import glob

import numpy as np
from scipy import misc, ndimage
from scipy.ndimage.interpolation import zoom

from keras import backend as K
from keras.layers.normalization import BatchNormalization
from keras.callbacks import TensorBoard
from keras.utils.data_utils import get_file
from keras.models import Sequential
from keras.layers.core import Flatten, Dense, Dropout, Lambda
from keras.layers.convolutional import Conv2D, MaxPooling2D, ZeroPadding2D  # Conv2D: Keras2
from keras.layers.pooling import GlobalAveragePooling2D
from keras.optimizers import SGD, RMSprop, Adam
from keras.preprocessing import image

# In case we are going to use the TensorFlow backend we need to explicitly set the Theano image ordering
K.set_image_dim_ordering('th')

vgg_mean = np.array([123.68, 116.779, 103.939], dtype=np.float32).reshape((3,1,1))

def vgg_preprocess(x):
    """
        Subtracts the mean RGB value, and transposes RGB to BGR.
        The mean RGB was computed on the image set used to train the VGG model.

        Args:
            x: Batch of images in 'th' ordering (N x channels x height x width)
        Returns:
            Batch of images (N x transposed_channels x height x width)
    """
    x = x - vgg_mean
    return x[:, ::-1]  # reverse the channel axis (axis 1): rgb->bgr

class Vgg16():
    """
        The VGG 16 Imagenet model
    """

    def __init__(self):
        self.FILE_PATH = 'http://files.fast.ai/models/'
        self.create()
        self.get_classes()

    def get_classes(self):
        """
            Downloads the Imagenet classes index file and loads it to self.classes.
            The file is downloaded only if it is not already in the cache.
        """
        fname = 'imagenet_class_index.json'
        fpath = get_file(fname, self.FILE_PATH+fname, cache_subdir='models')
        with open(fpath) as f:
            class_dict = json.load(f)
        self.classes = [class_dict[str(i)][1] for i in range(len(class_dict))]

    def predict(self, imgs, details=False):
        """
            Predict the labels of a set of images using the VGG16 model.

            Args:
                imgs (ndarray)    : An array of N images (size: N x channels x height x width,
                                    since the model is built with 'th' ordering).
                details : Not used in the body of this method.

            Returns:
                preds (np.array) : Highest confidence value of the predictions for each image.
                idxs (np.ndarray): Class index of the predictions with the max confidence.
                classes (list)   : Class labels of the predictions with the max confidence.
        """
        # predict probability of each class for each image
        all_preds = self.model.predict(imgs)
        # for each image get the index of the class with max probability
        idxs = np.argmax(all_preds, axis=1)
        # get the values of the highest probability for each image
        preds = [all_preds[i, idxs[i]] for i in range(len(idxs))]
        # get the label of the class with the highest probability for each image
        classes = [self.classes[idx] for idx in idxs]
        return np.array(preds), idxs, classes

    def ConvBlock(self, layers, filters):
        """
            Adds a specified number of ZeroPadding and Convolution layers
            to the model, and a MaxPooling layer at the very end.

            Args:
                layers (int):   The number of zero padded convolution layers
                                to be added to the model.
                filters (int):  The number of convolution filters to be
                                created for each layer.
        """
        model = self.model
        for i in range(layers):
            model.add(ZeroPadding2D((1, 1)))
            #model.add(Convolution2D(filters, (3, 3), activation='relu'))
            model.add(Conv2D(filters, kernel_size=(3, 3), activation='relu'))  # Keras2
        model.add(MaxPooling2D((2, 2), strides=(2, 2)))

    def FCBlock(self):
        """
            Adds a fully connected layer of 4096 neurons to the model with a
            Dropout of 0.5

            Args:   None
            Returns:   None
        """
        model = self.model
        model.add(Dense(4096, activation='relu'))
        model.add(Dropout(0.5))

    def create(self):
        """
            Creates the VGG16 network architecture and loads the pretrained weights.

            Args:   None
            Returns:   None
        """
        model = self.model = Sequential()
        model.add(Lambda(vgg_preprocess, input_shape=(3,224,224), output_shape=(3,224,224)))

        self.ConvBlock(2, 64)
        self.ConvBlock(2, 128)
        self.ConvBlock(3, 256)
        self.ConvBlock(3, 512)
        self.ConvBlock(3, 512)

        model.add(Flatten())
        self.FCBlock()
        self.FCBlock()
        model.add(Dense(1000, activation='softmax'))

        fname = 'vgg16.h5'
        model.load_weights(get_file(fname, self.FILE_PATH+fname, cache_subdir='models'))

    def get_batches(self, path, gen=image.ImageDataGenerator(), shuffle=True, batch_size=8, class_mode='categorical'):
        """
            Takes the path to a directory, and generates batches of augmented/normalized data. Yields batches indefinitely, in an infinite loop.

            See Keras documentation: https://keras.io/preprocessing/image/
        """
        return gen.flow_from_directory(path, target_size=(224,224),
                class_mode=class_mode, shuffle=shuffle, batch_size=batch_size)

    def ft(self, num):
        """
            Replace the last layer of the model with a Dense (fully connected) layer of num neurons.
            Will also lock the weights of all layers except the new layer so that we only learn
            weights for the last layer in subsequent training.

            Args:
                num (int) : Number of neurons in the Dense layer
            Returns:
                None
        """
        model = self.model
        model.pop()
        for layer in model.layers: layer.trainable=False
        model.add(Dense(num, activation='softmax'))
        self.compile()

    def finetune(self, batches):
        """
            Modifies the original VGG16 network architecture and updates self.classes for new training data.

            Args:
                batches : A keras.preprocessing.image.ImageDataGenerator object.
                          See definition for get_batches().
        """
        self.ft(batches.num_class)
        classes = list(iter(batches.class_indices))  # get a list of all the class labels

        # batches.class_indices is a dict with the class name as key and an index as value
        # eg. {'cats': 0, 'dogs': 1}

        # sort the class labels by index according to batches.class_indices and update model.classes
        for c in batches.class_indices:
            classes[batches.class_indices[c]] = c
        self.classes = classes

    def compile(self, lr=0.00003):
        """
            Configures the model for training.
            See Keras documentation: https://keras.io/models/model/
        """
        self.model.compile(optimizer=Adam(lr=lr),
                loss='categorical_crossentropy', metrics=['accuracy'])

    def fit_data(self, trn, labels, val, val_labels, nb_epoch=1, batch_size=64):
        """
            Trains the model for a fixed number of epochs (iterations on a dataset).
            See Keras documentation: https://keras.io/models/model/
        """
        self.model.fit(trn, labels, epochs=nb_epoch,
                validation_data=(val, val_labels), batch_size=batch_size)

    def fit(self, batches, val_batches, nb_epoch=1):
        """
            Fits the model on data yielded batch-by-batch by a Python generator.
            See Keras documentation: https://keras.io/models/model/
        """
        display("Yes2")
        tbCallBack = TensorBoard(log_dir='./Graph', histogram_freq=1, batch_size=batches.batch_size,
                write_graph=True, write_grads=True, write_images=True)
        self.model.fit_generator(batches, steps_per_epoch=ceil(batches.samples/batches.batch_size), epochs=nb_epoch,
                validation_data=val_batches, validation_steps=ceil(val_batches.samples/val_batches.batch_size),
                callbacks=[tbCallBack])

    def test(self, path, batch_size=8):
        """
            Predicts the classes using the trained model on data yielded batch-by-batch.

            Args:
                path (string):  Path to the target directory. It should contain one subdirectory
                                per class.
                batch_size (int): The number of images to be considered in each batch.

            Returns:
                test_batches, numpy array(s) of predictions for the test_batches.
        """
        test_batches = self.get_batches(path, shuffle=False, batch_size=batch_size, class_mode=None)
        return test_batches, self.model.predict_generator(test_batches,
                ceil(test_batches.samples/test_batches.batch_size))
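One way to test the "channels wrong" hunch in isolation is to run the preprocessing step on a small dummy batch outside Keras. The sketch below is a NumPy stand-in for `vgg_preprocess` (the name `vgg_preprocess_np` and the dummy values are made up for illustration). It assumes the batch is in 'th' ordering, (N, 3, H, W), with channels in RGB order, and checks that the mean is subtracted per channel and that the channel axis ends up as BGR.

```python
import numpy as np

vgg_mean = np.array([123.68, 116.779, 103.939], dtype=np.float32).reshape((3, 1, 1))

def vgg_preprocess_np(x):
    """NumPy stand-in for vgg_preprocess; x is (N, 3, H, W) in RGB order."""
    x = x - vgg_mean   # broadcasts the per-channel mean over N, H and W
    return x[:, ::-1]  # reverse axis 1 (channels): RGB -> BGR

# Dummy batch with constant colour planes: R=10, G=20, B=30
batch = np.empty((2, 3, 4, 4), dtype=np.float32)
batch[:, 0] = 10.0
batch[:, 1] = 20.0
batch[:, 2] = 30.0

out = vgg_preprocess_np(batch)
# Channel 0 of the output should now be blue minus the blue mean,
# and channel 2 should be red minus the red mean.
print(out[0, 0, 0, 0], out[0, 2, 0, 0])

# A channels-last batch does not broadcast against the (3, 1, 1) mean,
# so this particular layout mistake fails loudly in NumPy.
nhwc = np.zeros((2, 4, 4, 3), dtype=np.float32)
try:
    vgg_preprocess_np(nhwc)
    layout_error = False
except ValueError:
    layout_error = True
print(layout_error)
```

If the same check passes on a real batch from `get_batches`, the preprocessing step itself is probably not where the accuracy is being lost, and the remaining suspects are the loaded weights and the layer replacement in `ft`.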