OK, I’m finding the organization of these forums a little confusing. I hope this is the right place to post; if it’s not, I’d appreciate it if someone could point me in the right direction.

So, I’ve created a new directory structure from the Kaggle images downloaded with kaggle-cli. Here’s what I did: I put all the training images in a folder called ‘train’ and divided them into two subdirectories (cats, dogs) according to their filenames. Then I made a validation directory called ‘valid’ in the same folder as ‘train’, and moved about 20% of the images from ‘train’ into it, using the crude method of ‘mv *3.jpg …’ and ‘mv *4.jpg …’ to get that split. This let me train vgg on those images (in a copy of the original Lesson 1 notebook), and training went as expected. So far so good!
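For anyone who wants to do the same split without shell globbing, here is a minimal Python sketch of what the `mv` steps amount to. It assumes (not verified here) that the Kaggle training files are named like ‘cat.123.jpg’ / ‘dog.456.jpg’ and sit directly in ‘train’ before sorting; `split_kaggle_train` and `valid_digits` are hypothetical names. It does the sorting and the split in one pass rather than two, and it is written for Python 3 (`os.makedirs(..., exist_ok=True)` is not available in Python 2.7).

```python
import glob
import os
import shutil


def split_kaggle_train(data_dir, valid_digits=("3", "4")):
    """Sort <data_dir>/train/*.jpg into cats/dogs and move ~20% into valid.

    Hypothetical helper: assumes filenames like 'cat.123.jpg' / 'dog.456.jpg'.
    """
    train_dir = os.path.join(data_dir, "train")
    valid_dir = os.path.join(data_dir, "valid")
    for label in ("cats", "dogs"):
        os.makedirs(os.path.join(train_dir, label), exist_ok=True)
        os.makedirs(os.path.join(valid_dir, label), exist_ok=True)

    # Only top-level .jpg files match; the new cats/dogs folders do not.
    for path in glob.glob(os.path.join(train_dir, "*.jpg")):
        name = os.path.basename(path)
        label = "cats" if name.startswith("cat") else "dogs"
        stem = os.path.splitext(name)[0]
        # Same rule as 'mv *3.jpg', 'mv *4.jpg': names ending in 3 or 4 go to valid,
        # i.e. 2 of the 10 possible last digits, roughly 20% of the images.
        target = valid_dir if stem[-1] in valid_digits else train_dir
        shutil.move(path, os.path.join(target, label, name))
```

Usage would be something like `split_kaggle_train(path)`, where `path` is the folder containing ‘train’.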

But I got stuck at the next step. I copied some of the images from the test set into a directory called ‘smalltest’ (‘cp [0-9].jpg …’, copying 10 test images to make a small test set), and then tried to get predictions for those images, which is where I faltered. The example code seems to call vgg.predict() on the images fed to it. That looks straightforward, except that I can’t find any way to feed predict() the images in ‘smalltest’ without it throwing errors!
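The ‘cp [0-9].jpg …’ step can be sketched in Python as below. This is a minimal sketch: `make_small_test`, `test_dir` and `small_dir` are hypothetical stand-ins for the real paths. Note that the pattern `[0-9].jpg` only matches names that are a single digit followed by ‘.jpg’, and the images land directly in `small_dir`, exactly as with `cp`.

```python
import glob
import os
import shutil


def make_small_test(test_dir, small_dir, pattern="[0-9].jpg"):
    """Copy test images whose names match `pattern` into small_dir.

    Hypothetical helper mirroring `cp [0-9].jpg ...`; returns the copied names.
    """
    os.makedirs(small_dir, exist_ok=True)  # Python 3 only
    copied = []
    for src in sorted(glob.glob(os.path.join(test_dir, pattern))):
        shutil.copy(src, small_dir)  # files go directly into small_dir, like cp
        copied.append(os.path.basename(src))
    return copied
```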

I even tried:

```python
# I used batch_size=2 because I kept getting a 'modulo by zero' error and thought
# a lower batch size might fix it, but the error seems independent of this value
small_batch = vgg.get_batches(path+'smalltest', batch_size=2)

for img, label in small_batch:
    plots(img, label)
```

I tried this just to get some idea of how vgg takes these images in, and of course it fails. It looks like predict() should work straightforwardly if I can feed it data in the format it expects, but I’m not sure how to do that.

Edit:

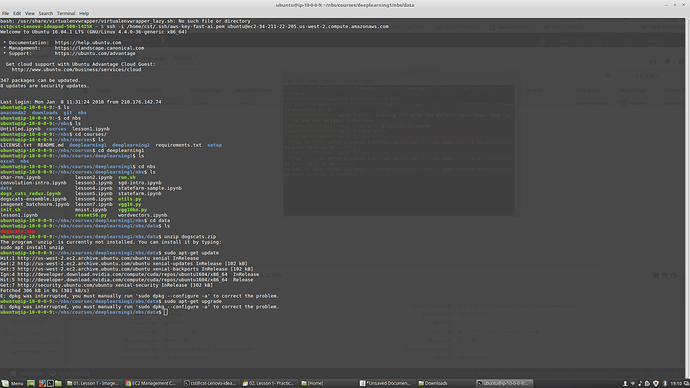

I forgot to mention that I’m on a p2 server. I looked around this forum again and decided to try the test() method described above, like this:

```python
batches, preds = vgg.test(path + 'smalltest', batch_size=batch_size)

out = []
for img_path, pred in zip(batches.filenames, preds):
    out.append([img_path, pred])

print(out)
```

(Printing them out as an initial sanity check)

I again got the same error:

```
Exception in thread Thread-6:
Traceback (most recent call last):
  File "/home/ubuntu/anaconda2/lib/python2.7/threading.py", line 801, in __bootstrap_inner
    self.run()
  File "/home/ubuntu/anaconda2/lib/python2.7/threading.py", line 754, in run
    self.__target(*self.__args, **self.__kwargs)
  File "/home/ubuntu/anaconda2/lib/python2.7/site-packages/keras/engine/training.py", line 425, in data_generator_task
    generator_output = next(generator)
  File "/home/ubuntu/anaconda2/lib/python2.7/site-packages/keras/preprocessing/image.py", line 593, in next
    index_array, current_index, current_batch_size = next(self.index_generator)
  File "/home/ubuntu/anaconda2/lib/python2.7/site-packages/keras/preprocessing/image.py", line 441, in _flow_index
    current_index = (self.batch_index * batch_size) % N
ZeroDivisionError: integer division or modulo by zero
```
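To see what the last line of the traceback means, here is that one expression pulled out on its own. It is a toy, not Keras code: `flow_index_step` is a hypothetical helper, and I’m assuming `N` is the number of images the iterator found. Whatever the batch index or batch size, `% N` can only raise ZeroDivisionError when `N` is 0, which would fit with changing batch_size making no difference.

```python
def flow_index_step(batch_index, batch_size, n):
    """Toy version of the failing line:
        current_index = (self.batch_index * batch_size) % N
    `n` stands in for N (assumed: number of images the iterator found).
    """
    return (batch_index * batch_size) % n


print(flow_index_step(3, 2, 10))  # 6 % 10 -> 6

try:
    flow_index_step(0, 2, 0)  # any batch_size fails once N == 0
except ZeroDivisionError as err:
    print("N == 0:", err)
```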

My last hypothesis was that there were somehow too few images in the directory. So I tried the ‘test’ directory instead of ‘smalltest’, and got the same error again. Could this be a problem with the installed Keras version?
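One way to test the “too few images” hypothesis is to count what a directory-based loader actually sees. The sketch below is my approximation of Keras’s `flow_from_directory`, which I’m assuming `vgg.get_batches` and `vgg.test` call under the hood: each subdirectory is treated as a class, and only images inside those subdirectories are counted. `count_like_flow_from_directory` and the extension list are hypothetical, not Keras code.

```python
import os

# Extensions assumed to be accepted; treat this list as an assumption.
IMAGE_EXTS = (".png", ".jpg", ".jpeg", ".bmp")


def count_like_flow_from_directory(directory):
    """Approximate how many images a flow_from_directory-style loader finds.

    Subdirectories are classes; only image files inside them are counted.
    Images sitting directly in `directory` are skipped.
    """
    classes = sorted(
        d for d in os.listdir(directory)
        if os.path.isdir(os.path.join(directory, d))
    )
    total = 0
    for cls in classes:
        for fname in os.listdir(os.path.join(directory, cls)):
            if fname.lower().endswith(IMAGE_EXTS):
                total += 1
    return classes, total
```

Something like `print(count_like_flow_from_directory(path + 'smalltest'))` would show the count. If it reports 0 images for both ‘smalltest’ and ‘test’, that would match `N` being 0 in the traceback, and moving the images one level down into a single subfolder (e.g. a hypothetical ‘smalltest/unknown/’) would be worth trying.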

super excited to start this over the next few days!!
