@jeremy, I just tried git pull and conda env update, but the kernel still shuts down while processing ImageClassifierData.from_csv. I am working on the Google landmark recognition competition, where the dataset is very large. I am using Paperspace with 30 GB of RAM and a 16 GB GPU.

Hi. The PR is already merged so no need to make the same changes unless you are on an old version (in which case, try pulling the latest one!).

After the PR and after upgrading to 60 GB RAM I was able to run from_csv without crashing, but I think some parts of the fastai library are still very memory intensive on big datasets with this many labels, so I had to do inference in parts, for example (due to out-of-RAM crashes, if I recall correctly).

Thanks for the quick response, William, but I have already tried git pull and conda env update recently and it hasn’t resolved the issue for me.

I’ll check with PaperSpace support if RAM can be increased.

Hi Ankit,

At least on 12 March, Paperspace did not have more RAM and GPU available for the same machine. I went for AWS instead.

The dedicated GPU machines all have 30 GB of RAM; the P5000 has more GPU memory.

Unfortunately at this time we can’t upgrade RAM on those machines.

Hi William,

As suggested, I moved to a p2.8xlarge AWS instance and did git pull, but the kernel is still dying. For me, the changes made by your PR are not working for the Google landmark recognition competition.

You mentioned above that you did inference in parts due to RAM crashes. Can you send me the code snippet you used to resolve this problem?

I am new to coding so any help is appreciated.

Hi Ankit,

Where is your code failing exactly, still in from_csv? Can you post the error trace from the kernel failure? With p2.8xlarge (488 GB of RAM) it definitely shouldn’t fail due to the same thing anymore; maybe the later versions of fast.ai have some new memory suck…?

I don’t have the inference code handy anymore :-/ But with 488 GB of RAM, you shouldn’t need it.
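For anyone who does need it, here is a rough sketch of the general "inference in parts" idea. It is not the original code and does not use any fastai API: `predict_fn` is a hypothetical stand-in for whatever returns one row of class scores per item, and the chunk size is arbitrary. The point is to predict on one chunk at a time and keep only the top class index per item, so the full scores matrix for all items and all labels never sits in RAM at once.

```python
def predict_in_chunks(predict_fn, items, chunk_size=1000):
    """Predict chunk by chunk and keep only the best class index per item.

    predict_fn is a hypothetical callable: it takes a list of items and
    returns one row of class scores (a sequence of numbers) per item.
    """
    top_classes = []
    for start in range(0, len(items), chunk_size):
        chunk = items[start:start + chunk_size]
        for row in predict_fn(chunk):
            # Index of the highest score in this row (argmax).
            top_classes.append(max(range(len(row)), key=row.__getitem__))
        # The scores for this chunk go out of scope here and can be freed.
    return top_classes


def fake_predict(chunk):
    # Stand-in for a real model: the highest score is at index (item % 3).
    return [[1.0 if c == item % 3 else 0.0 for c in range(3)] for item in chunk]


print(predict_in_chunks(fake_predict, list(range(7)), chunk_size=3))
# prints [0, 1, 2, 0, 1, 2, 0]
```

With a real model, `predict_fn` would wrap the actual prediction call on a slice of the test files, and the results could also be written to disk per chunk instead of collected in a list.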

I made the changes in dataloader.py, but the link for dataloaderiter.py does not open. Can you share the code snippet of the changes, or a link to it? My kernel is dying on the materialist Kaggle challenge, and so far I have found no solution.

@farlion What changes did you make to handle the kernel dying problem? Please let me know of any solution you have tried.

Hi, the memory problem has been fixed by Jeremy, you no longer need to change anything.

Just run git pull and conda env update

I moved the repo a couple weeks ago, that’s why the link doesn’t work anymore.

Cheers, Johannes

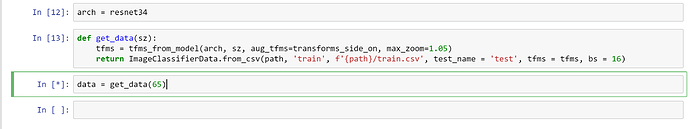

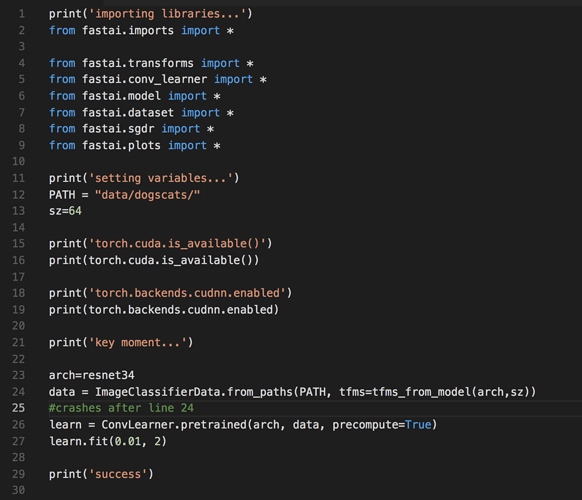

I just set up a new machine on an AWS p2.xlarge instance and did git pull and conda env update, but it’s still not working for me. I’m using the code below. Any thoughts?

Hi all. I’ve been having this exact issue. When using the latest Jupyter Notebook, my kernel crashes on the line

data=ImageClassifierData.from_paths(PATH,tfms=tfms_from_model(model, sz))

Note: the latest version uses from_csv(), but there isn’t a .csv file I can find anywhere! So instead, I’ve been using the older version.

I have the latest versions of CentOS, Python, and PyTorch, and have done a git pull. When I run the code as just a Python script:

I get the exception: illegal instruction (core dumped) - on the line mentioned above.

I’m thinking something with my machine might be the issue, could anyone confirm this if I gave more info?
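One way to gather more info, as a sketch: an "illegal instruction" crash generally means the process executed a CPU instruction the processor does not support, which can happen when a compiled library was built for newer instruction sets than the machine has. Whether that is the cause here is only a guess. The snippet below uses the standard-library `faulthandler` module to print the Python traceback when the process dies from a fatal signal (including SIGILL), and parses `/proc/cpuinfo` on Linux to list CPU feature flags. The flag names in `wanted` are illustrative assumptions, not a documented requirement of PyTorch or fastai.

```python
import faulthandler
import os


def enable_crash_traceback():
    # Dump the Python traceback to stderr on SIGSEGV, SIGFPE, SIGABRT,
    # SIGBUS or SIGILL, so the failing line is visible before the core dump.
    faulthandler.enable()


def cpu_flags(cpuinfo_text):
    """Return the set of CPU feature flags from /proc/cpuinfo-style text."""
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "flags":
            return set(value.split())
    return set()


def missing_flags(cpuinfo_text, wanted=("sse4_2", "avx", "avx2")):
    # `wanted` is an illustrative guess at commonly required flags.
    flags = cpu_flags(cpuinfo_text)
    return [flag for flag in wanted if flag not in flags]


if os.path.exists("/proc/cpuinfo"):
    with open("/proc/cpuinfo") as f:
        print("Missing CPU flags:", missing_flags(f.read()))
```

Calling `enable_crash_traceback()` at the top of the script before the fastai imports, and posting the resulting traceback together with the missing flags, should make it easier for others to confirm whether the machine is the issue.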

I have the same issue, did you ever figure out what the problem was?