Hey,

You did a great job so far!

You can use scp

scp -r /path/to/foo user@your.server.example.com:/home/ubuntu/data/

where

-r Recursively copy entire directories

If you use a private key for connection you may want to add this option too

-i ~/.ssh/private_key_file

Here is how to split your data in test train valid Wiki: Lesson 1

And yes, you need to make the folders yourself, and then you specify in jupyter notebook the PATH to them.

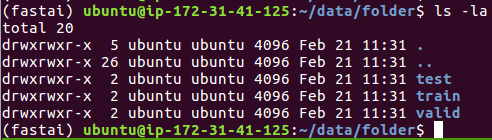

You should have a structure like this, where PATH = '/home/ubuntu/data/folder/'