@jeremy Was image captioning implemented in Part 2 of the course?

Not directly, although the section on DeVISE covered pretty much all you need to implement it. Would be a good class project.

@jeremy I took your advice and have started implementing image captioning as a project, but I am running into some problems. The loss is stuck at around 5 and the accuracy at around 0.07. I have tried changing the activations, the learning rate, and the optimizer, but none of it had any effect. Can anyone tell me how to resolve this?

Link to the code: https://gist.github.com/yashk2810/d49f2e40b624a782552b32bfdb9cc6cc
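For anyone hitting the same plateau, it can help to translate the loss into something interpretable. Assuming the reported loss is mean categorical cross-entropy in nats (Keras uses the natural log), exp(loss) is the perplexity, and ln(V) is the loss of a model that guesses uniformly over a vocabulary of V words. A minimal sketch; the vocabulary size below is a hypothetical placeholder:

```python
import math

def perplexity(loss_nats: float) -> float:
    """Perplexity implied by a mean cross-entropy loss measured in nats."""
    return math.exp(loss_nats)

def uniform_baseline_loss(vocab_size: int) -> float:
    """Cross-entropy of a model that assigns probability 1/vocab_size to every word."""
    return math.log(vocab_size)

# Hypothetical vocabulary size; substitute the size of your own word index.
VOCAB_SIZE = 8000

stuck_loss = 5.0
print(f"perplexity at loss {stuck_loss}: {perplexity(stuck_loss):.1f}")
print(f"uniform-guess loss for V={VOCAB_SIZE}: {uniform_baseline_loss(VOCAB_SIZE):.2f}")
```

A loss of 5 corresponds to a perplexity of about 148, well below a uniform guess over a few thousand words, so the model has learned something. One common reading of a plateau like this is that the model has picked up word frequencies but not the link to the image; treat that as a hypothesis to check, not a diagnosis.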

Jeremy, I LOVED the section of the course covering DeVISE - such an elegant idea!

I suspect you’d use an LSTM or some other sort of RNN to go from the word2vec embedding of the image to a sentence, but I’d appreciate it if you could elaborate in a couple of sentences on how to get from DeVISE to image captioning.

Thanks!

I’m so glad you liked it - it was fun to put together! Yes, you’d use an RNN for the sentence embedding. See the later translation videos for some ideas that might be useful.
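To make the RNN idea concrete, here is a minimal NumPy sketch of one common way to condition a sentence generator on an image (not necessarily what the course or the projects later in this thread do). Assumptions: a DeViSE-style model has already mapped the image to a 300-d vector in word-vector space, that vector seeds the hidden state of a plain (vanilla) RNN, and words are then emitted greedily until an end token. The toy vocabulary is hypothetical and the weights are random, so the output is not a meaningful caption; in practice the weights would be trained and an LSTM or GRU would replace the vanilla cell.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical toy vocabulary; index 0 is the start token, index 1 the end token.
VOCAB = ["<start>", "<end>", "a", "dog", "runs", "on", "grass"]
EMB_DIM = 300  # assumed size of the DeViSE image/word vector space
HIDDEN = 300   # equal to EMB_DIM so the image vector can seed the hidden state

# Randomly initialised parameters; a real model would learn these.
W_embed = rng.normal(scale=0.1, size=(len(VOCAB), EMB_DIM))  # word embeddings
W_xh = rng.normal(scale=0.1, size=(EMB_DIM, HIDDEN))         # input -> hidden
W_hh = rng.normal(scale=0.1, size=(HIDDEN, HIDDEN))          # hidden -> hidden
W_hy = rng.normal(scale=0.1, size=(HIDDEN, len(VOCAB)))      # hidden -> vocab logits

def softmax(z):
    z = z - z.max()  # subtract the max for numerical stability
    e = np.exp(z)
    return e / e.sum()

def greedy_caption(image_vec, max_len=10):
    """Unroll a vanilla RNN whose initial hidden state comes from the image vector."""
    h = np.tanh(image_vec)  # seed the hidden state with the squashed image embedding
    word = 0                # begin with <start>
    caption = []
    for _ in range(max_len):
        h = np.tanh(W_embed[word] @ W_xh + h @ W_hh)
        probs = softmax(h @ W_hy)
        probs[0] = 0.0              # never re-emit <start>
        word = int(probs.argmax())  # greedy choice of the next word
        if word == 1:               # stop at <end>
            break
        caption.append(VOCAB[word])
    return caption

# Stand-in for the output of a DeViSE image model (random here).
image_vec = rng.normal(size=EMB_DIM)
print(greedy_caption(image_vec))
```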

Makes sense, thank you!

In case anyone is interested:

I’ve started watching the Andrej Karpathy CS231n videos and came across his 2015 paper on generating image captions using an RNN on top of a CNN:

Also, the DeepMind team recently released their image captioning model, written in TensorFlow. It is available here:

I have started implementing image captioning using Keras on the Flickr8k dataset. At 35.33% accuracy I am getting decent results, but I still have to train more. If you want, we can collaborate.

Code link: https://github.com/yashk2810/Image-Captioning/blob/master/Image%20Captioning%20Bidirectional%20LSTM.ipynb
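For anyone following along, a common way to set up this kind of Keras captioning model is to wrap each caption in start/end tokens and expand it into (image, partial caption) → next-word training pairs. In that setup, the accuracy Keras reports is per-word next-token accuracy, not whole-caption correctness. A minimal pure-Python sketch; the token names, vocabulary, caption, and image id are hypothetical, and the linked notebook may prepare its data differently:

```python
def make_training_pairs(image_id, caption, word_to_idx, start="<start>", end="<end>"):
    """Expand one caption into (image_id, partial-caption indices, next-word index) pairs."""
    tokens = [start] + caption.lower().split() + [end]
    ids = [word_to_idx[t] for t in tokens]
    pairs = []
    for i in range(1, len(ids)):
        # Input: every word seen so far; target: the word that follows it.
        pairs.append((image_id, ids[:i], ids[i]))
    return pairs

# Hypothetical vocabulary and caption for illustration.
vocab = ["<start>", "<end>", "a", "dog", "runs"]
word_to_idx = {w: i for i, w in enumerate(vocab)}

for pair in make_training_pairs("img_001", "A dog runs", word_to_idx):
    print(pair)
```

This prints one tuple per step: `('img_001', [0], 2)`, `('img_001', [0, 2], 3)`, `('img_001', [0, 2, 3], 4)`, `('img_001', [0, 2, 3, 4], 1)`. The partial captions would then be padded to a fixed length (for example with Keras' `pad_sequences`) before being fed to an embedding layer and LSTM alongside the image features.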

Very cool! Absolutely swamped with work right now, but I will try to remember to come back and take a look at this when I have some time to delve into it properly.

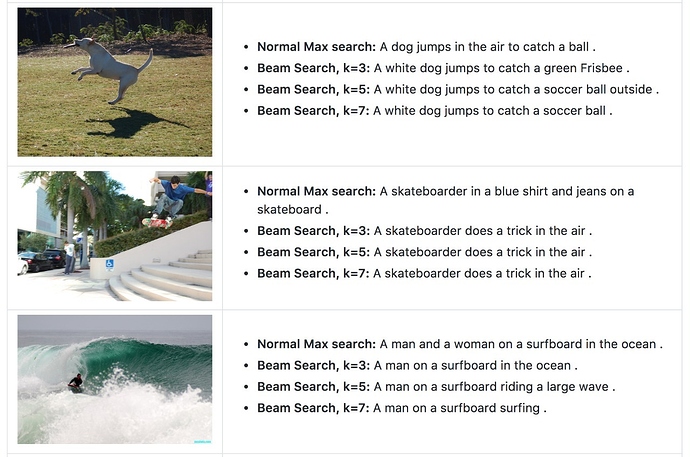

Image Captioning using InceptionV3 and Beam Search. https://github.com/yashk2810/Image-Captioning

https://yashk2810.github.io/Image-Captioning-using-InceptionV3-and-Beam-Search/
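For readers unfamiliar with beam search: instead of taking the single most likely word at each step (greedy decoding), it keeps the `beam_width` highest-scoring partial captions, extends each one by every candidate next word, and again keeps the best `beam_width` by summed log-probability. A minimal pure-Python sketch; `next_word_log_probs` is a hypothetical stand-in for the trained model (in the linked project, the InceptionV3 features plus the language model), and the toy probability table is invented purely to exercise the code:

```python
import math

def beam_search(next_word_log_probs, start="<start>", end="<end>", beam_width=3, max_len=20):
    """Return the highest-scoring caption (list of words) under a next-word model.

    next_word_log_probs(seq) -> dict mapping each candidate next word to its log-probability.
    """
    beams = [([start], 0.0)]  # (sequence, summed log-probability)
    finished = []
    for _ in range(max_len):
        candidates = []
        for seq, score in beams:
            for word, logp in next_word_log_probs(seq).items():
                candidates.append((seq + [word], score + logp))
        # Keep only the beam_width best extensions; completed ones leave the beam.
        candidates.sort(key=lambda c: c[1], reverse=True)
        beams = []
        for seq, score in candidates[:beam_width]:
            if seq[-1] == end:
                finished.append((seq, score))
            else:
                beams.append((seq, score))
        if not beams:
            break
    finished.extend(beams)  # include unfinished beams if max_len was reached
    best_seq, _ = max(finished, key=lambda c: c[1])
    return [w for w in best_seq if w not in (start, end)]

# Invented toy model: greedy decoding commits to "a" and misses the likelier "the dog".
TOY = {
    ("<start>",): {"a": 0.6, "the": 0.4},
    ("<start>", "a"): {"dog": 0.35, "cat": 0.35, "<end>": 0.3},
    ("<start>", "the"): {"dog": 0.9, "<end>": 0.1},
}

def toy_model(seq):
    probs = TOY.get(tuple(seq), {"<end>": 1.0})  # any other prefix simply ends
    return {w: math.log(p) for w, p in probs.items()}

print(beam_search(toy_model, beam_width=1))  # greedy: ['a', 'dog']
print(beam_search(toy_model, beam_width=3))  # ['the', 'dog']
```

With `beam_width=1` this reduces to greedy decoding, which scores "a dog" at 0.6 × 0.35 = 0.21, while the wider beam finds "the dog" at 0.4 × 0.9 = 0.36. Practical implementations usually also length-normalise the scores, since summed log-probabilities favour short captions; the sketch omits that.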