Unless I’m missing something obvious, once a notebook is committed it becomes very complicated to commit further changes to it (both for PRs and for those with commit access). This is because we have all cells committed to the repository, and not just the source cells.

I understand the reason for having output cells committed - it is a convenience while teaching, or even while reading, if you don’t necessarily want to run the notebook to see what it does. No objections here.

But there must be a better way for us to submit changes to aforementioned notebooks.

Currently my process is:

- make changes in the notebook to my satisfaction and see it working - save .ipynb from jupyter interface

- open an unmodified notebook in an editor - find the changes and manually copy them to that notebook (a big pain in the ass since you have to tweak JSON - ok when you just change a few words, a pain if you add/remove lines or cells) - save.

- load the modified notebook and test that it works.

- since jupyter will have overwritten the notebook in the meantime, force a re-save of the version open in the editor

- shut down the kernel so that it won’t overwrite the notebook

- git diff (to validate again)

- git commit

Granted I could turn auto-saving off, but it doesn’t make things much easier.

Here are some things I have been considering to solve this problem.

1. nbdime

nbdime is a fantastic tool for working with notebooks. Currently I have it configured to ignore everything but source cells, so when I run nbdiff (or git diff, which can be configured to invoke nbdiff), I get a cool diff of just the source cells, ignoring all the metadata, outputs and execution_counts.
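To make "just the source cells" concrete, here is a minimal sketch of a source-only comparison using plain Python dicts. It is not how nbdime is implemented; the helper names `source_cells` and `same_source` and the sample notebook are made up for illustration, and it assumes the usual notebook JSON layout with a top-level `cells` list.

```python
import copy

def source_cells(nb):
    """Return (cell_type, source) pairs, dropping outputs, counts and metadata."""
    pairs = []
    for cell in nb.get("cells", []):
        src = cell.get("source", "")
        # The notebook JSON stores source as a string or as a list of lines.
        if isinstance(src, list):
            src = "".join(src)
        pairs.append((cell.get("cell_type"), src))
    return pairs

def same_source(nb_a, nb_b):
    """True when two notebooks differ only outside their source."""
    return source_cells(nb_a) == source_cells(nb_b)

# Hypothetical one-cell notebook, as it might look in the repository.
committed = {
    "cells": [{
        "cell_type": "code",
        "source": ["x = 1\n", "x"],
        "execution_count": 1,
        "outputs": [],
        "metadata": {},
    }],
    "metadata": {}, "nbformat": 4, "nbformat_minor": 2,
}

# Re-running the notebook changes the counter and outputs, not the source.
rerun = copy.deepcopy(committed)
rerun["cells"][0]["execution_count"] = 7
rerun["cells"][0]["outputs"] = [
    {"output_type": "stream", "name": "stdout", "text": ["1\n"]}
]

print(same_source(committed, rerun))
```

A check like this only tells you whether the source changed; it does nothing about what ends up in the commit.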

Except this doesn’t help with commits. I can see how my source cells have changed, but when I commit, all the other cells that got modified (and jupyter pretty much changes all of them every time you run the notebook) get committed too. Not good!

I suppose I could use nbmerge:

Merge two Jupyter notebooks “local” and “remote” with a common ancestor “base”.

but then I still can’t re-test the merged notebook, unless I make sure that jupyter doesn’t overwrite it while I’m testing it.

I like the autosave function and it’s useful all the time, except when testing a change that is about to be committed.

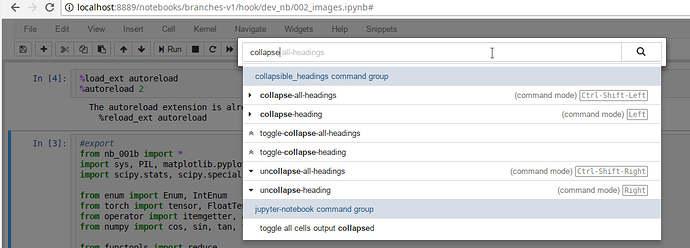

At the very least it’d be good to have a toggle button to turn Autosave On/Off on demand.

2. Adding cell-level granularity to jupyter notebook’s save function

Then I was thinking that perhaps jupyter’s save/autosave functionality could be modified to let the user configure what cells get saved.

So, for example, we could then keep just the source and output cells under git and have the rest ignored. This would already simplify things a bit.

But if there was a way for me to tell jupyter notebook to overwrite only source cells, it would make the PR/commit process so much simpler. You’d just modify the notebook in jupyter and commit it as is, since only source cells would ever change (or get added/deleted).

While I think this would be relatively easy to implement for changes within source cells, I have a feeling that it’d be very difficult to implement for adding/removing/moving source cells. That would require some complicated tracking of the original and final states, and it’d be a complex merge, so I’m not quite sure this can be automated.
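A rough sketch of the easy case, assuming the cells line up one-to-one between the committed and the edited notebook. The function name `overwrite_sources` and the sample data are hypothetical; this is not an existing jupyter feature. It simply refuses to guess when the number or type of cells differs, which is exactly the hard case described above.

```python
import copy

def overwrite_sources(committed, edited):
    """Copy each cell's source from `edited` into a copy of `committed`."""
    old_cells, new_cells = committed["cells"], edited["cells"]
    same_layout = len(old_cells) == len(new_cells) and all(
        o["cell_type"] == n["cell_type"] for o, n in zip(old_cells, new_cells)
    )
    if not same_layout:
        # Added, removed or retyped cells would need a real merge.
        raise ValueError("cell layout changed; cannot overwrite sources only")
    merged = copy.deepcopy(committed)
    for target, new in zip(merged["cells"], new_cells):
        target["source"] = copy.deepcopy(new["source"])
    return merged

# Hypothetical committed notebook and a locally re-run, edited copy.
committed = {"cells": [{
    "cell_type": "code", "source": ["x = 1"], "execution_count": 1,
    "outputs": [], "metadata": {},
}]}
edited = {"cells": [{
    "cell_type": "code", "source": ["x = 1  # initial value"],
    "execution_count": 12, "outputs": [], "metadata": {"scrolled": True},
}]}

merged = overwrite_sources(committed, edited)
print(merged["cells"][0])
```

Note that the committed outputs are left untouched, so after a real code change they may no longer match the new source.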

3. Keeping just the source cells in git

And of course all these problems would go away if we were to only ever keep the source cells under git. (nbstripout on commit or something similar). Then one can just edit the notebook in jupyter and commit right away (with the same nbstripout setup).
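For reference, the core of what a strip-on-commit step does can be sketched in a few lines. This is only an illustration of the idea, not nbstripout’s actual code; `strip_outputs` and the sample notebook are made-up names, and real tools handle more (metadata, widgets, file I/O, git filters).

```python
import copy

def strip_outputs(nb):
    """Return a copy of the notebook with outputs and execution counts cleared."""
    stripped = copy.deepcopy(nb)
    for cell in stripped.get("cells", []):
        if cell.get("cell_type") == "code":
            cell["outputs"] = []
            cell["execution_count"] = None
    return stripped

# Hypothetical notebook after a local run.
nb = {"cells": [
    {"cell_type": "markdown", "source": ["# Title"], "metadata": {}},
    {"cell_type": "code", "source": ["print('hi')"], "execution_count": 3,
     "outputs": [{"output_type": "stream", "name": "stdout", "text": ["hi\n"]}],
     "metadata": {}},
]}

clean = strip_outputs(nb)
print(clean["cells"][1])
```

With something like this applied on every commit, re-running a notebook without touching its source produces no diff at all.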

As I mentioned at the beginning of this post, I understand why outputs are under git, but it wastes a significant amount of development time and is error-prone. Perhaps we can think of a different solution for having the outputs and still keeping the source cells separate?

Thoughts?

And of course, if you have an easier process for committing notebook changes (it must include a pre-commit testing run), please kindly share.

Thank you.

p.s. these links look relevant to the subject:

- https://stackoverflow.com/questions/18734739/using-ipython-notebooks-under-version-control

- nbstripout hooks: https://gist.github.com/minrk/6176788

and here is a fresh example of day-old commits which change all cells while improving comments:

Not reviewer-friendly at all, a big cause of conflicts, and it leads to a much more complicated merge process

(and nothing personal against @lesscomfortable, this was just an example to illustrate the point).