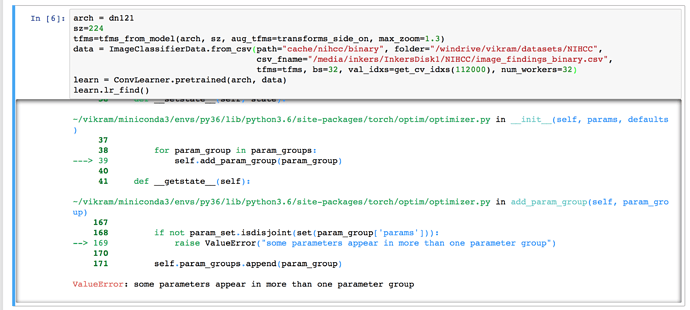

I am trying to fine-tune a pretrained DenseNet-121 model on my data, but I am getting the following error:

    ValueError: some parameters appear in more than one parameter group

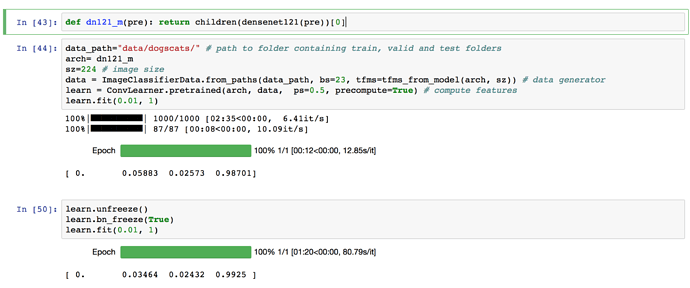

I am using `dn121`, which is defined in `fastai.torch_imports`. This is how `dn121` is defined:

    def dn121(pre): return children(densenet121(pre))[0]

`dn121` uses torchvision's `densenet121` model. Does this error mean the problem is in PyTorch rather than in fastai?

Can anyone tell me what this error means and how to fix it? Thanks in advance.

EDIT:

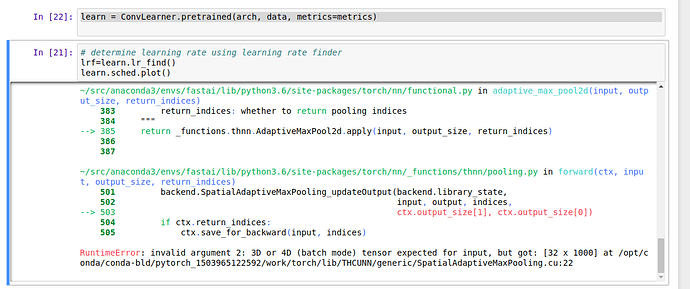

Complete Error Stack

---------------------------------------------------------------------------

ValueError Traceback (most recent call last)

<ipython-input-14-4c49f1620610> in <module>()

6 tfms=tfms, bs=32, val_idxs=get_cv_idxs(112000), num_workers=32)

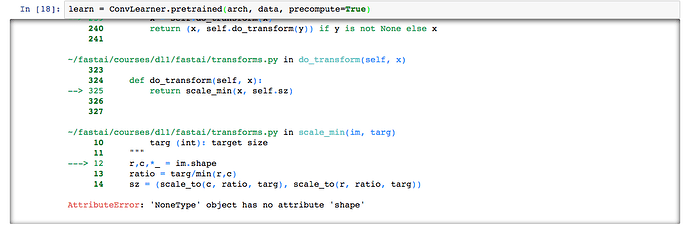

7 learn = ConvLearner.pretrained(arch, data)

----> 8 learn.lr_find()

~/vikram/fast_ai/fastai/courses/dl1/fastai/learner.py in lr_find(self, start_lr, end_lr, wds)

133 """

134 self.save('tmp')

--> 135 layer_opt = self.get_layer_opt(start_lr, wds)

136 self.sched = LR_Finder(layer_opt, len(self.data.trn_dl), end_lr)

137 self.fit_gen(self.model, self.data, layer_opt, 1)

~/vikram/fast_ai/fastai/courses/dl1/fastai/learner.py in get_layer_opt(self, lrs, wds)

92

93 def get_layer_opt(self, lrs, wds):

---> 94 return LayerOptimizer(self.opt_fn, self.get_layer_groups(), lrs, wds)

95

96 def fit(self, lrs, n_cycle, wds=None, **kwargs):

~/vikram/fast_ai/fastai/courses/dl1/fastai/layer_optimizer.py in __init__(self, opt_fn, layer_groups, lrs, wds)

15 if len(wds)==1: wds=wds*len(layer_groups)

16 self.layer_groups,self.lrs,self.wds = layer_groups,lrs,wds

---> 17 self.opt = opt_fn(self.opt_params())

18

19 def opt_params(self):

~/vikram/fast_ai/fastai/courses/dl1/fastai/core.py in <lambda>(*args, **kwargs)

63

64 def SGD_Momentum(momentum):

---> 65 return lambda *args, **kwargs: optim.SGD(*args, momentum=momentum, **kwargs)

66

67 def one_hot(a,c): return np.eye(c)[a]

~/vikram/miniconda3/envs/py36/lib/python3.6/site-packages/torch/optim/sgd.py in __init__(self, params, lr, momentum, dampening, weight_decay, nesterov)

54 if nesterov and (momentum <= 0 or dampening != 0):

55 raise ValueError("Nesterov momentum requires a momentum and zero dampening")

---> 56 super(SGD, self).__init__(params, defaults)

57

58 def __setstate__(self, state):

~/vikram/miniconda3/envs/py36/lib/python3.6/site-packages/torch/optim/optimizer.py in __init__(self, params, defaults)

37

38 for param_group in param_groups:

---> 39 self.add_param_group(param_group)

40

41 def __getstate__(self):

~/vikram/miniconda3/envs/py36/lib/python3.6/site-packages/torch/optim/optimizer.py in add_param_group(self, param_group)

167

168 if not param_set.isdisjoint(set(param_group['params'])):

--> 169 raise ValueError("some parameters appear in more than one parameter group")

170

171 self.param_groups.append(param_group)

ValueError: some parameters appear in more than one parameter group
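For anyone reading the trace: the exception is raised inside PyTorch's `add_param_group`, but the groups it rejects are the ones built by fastai's `get_layer_groups()` and passed through `LayerOptimizer`. The check at line 168 only requires that no parameter is listed in two groups. Below is a minimal pure-Python sketch of that disjointness check, useful as a rough diagnostic. The helper names `find_shared_params` and `check_disjoint` are hypothetical (they are not part of fastai or PyTorch), and the stand-in objects take the place of real parameter tensors.

```python
from collections import defaultdict


def find_shared_params(param_groups):
    """Map id(param) -> sorted group indices, for params found in 2+ groups.

    `param_groups` is assumed to be a list of iterables of parameter objects.
    Objects are compared by identity, not by value.
    """
    seen = defaultdict(set)
    for idx, group in enumerate(param_groups):
        for p in group:
            seen[id(p)].add(idx)
    return {pid: sorted(idxs) for pid, idxs in seen.items() if len(idxs) > 1}


def check_disjoint(param_groups):
    """Raise the same kind of error as the trace if any groups overlap."""
    if find_shared_params(param_groups):
        raise ValueError("some parameters appear in more than one parameter group")


# Stand-ins for parameter tensors (plain objects, purely for illustration).
w1, w2, w3 = object(), object(), object()

ok_groups = [[w1], [w2, w3]]       # every parameter is in exactly one group
bad_groups = [[w1, w2], [w2, w3]]  # w2 is listed in group 0 and group 1

check_disjoint(ok_groups)          # passes silently
try:
    check_disjoint(bad_groups)
except ValueError as err:
    print("Overlap detected:", err)
```

If each of your layer groups can be flattened into a list of its parameters, passing those lists to a helper like this should show which groups overlap. That is an assumption about how the groups are laid out, not something the trace confirms.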

It’s high on my priority list though.
