Looks like the final answer wasn’t that complicated. Just throw a bunch of pretrained networks at the problem + ensembling.

So much training and training time though…I wonder, is this really applicable in real life applications?

Just seems like a grand ensemble of all possibilities, which wouldn’t be useful or applicable for real world applications?

You can try to create a single neural network that consolidates the information from your ensemble into a single simpler model.
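A minimal sketch of that idea (often called distillation), assuming the ensemble members' P(dog) outputs are already available as plain lists. The student here is a tiny logistic regression standing in for the "single simpler model"; `average_predictions`, `train_student` and `student_predict` are hypothetical names for illustration, not functions from the course notebooks.

```python
import math

def average_predictions(member_probs):
    """Average P(dog) over ensemble members (one list of probabilities per member)."""
    return [sum(column) / len(column) for column in zip(*member_probs)]

def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def train_student(features, soft_targets, lr=0.5, epochs=500):
    """Fit a logistic-regression student to the ensemble's averaged probabilities."""
    n, d = len(features), len(features[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for x, t in zip(features, soft_targets):
            p = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)
            err = p - t  # gradient of the cross-entropy w.r.t. the logit
            for j in range(d):
                grad_w[j] += err * x[j] / n
            grad_b += err / n
        w = [wi - lr * g for wi, g in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b

def student_predict(x, w, b):
    """P(dog) from the trained student for one feature vector."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)
```

The point is that the student is trained on the ensemble's soft probabilities rather than on the hard 0/1 labels, so at prediction time only one small model has to run.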

Hi, I am trying to run the ensemble notebook but I am running into a problem. It happens on the first pass of building the ensemble, when the weights are set at the top of train_dense_layers:

    def train_dense_layers(i, model):
        conv_model, fc_layers, last_conv_idx = get_conv_model(model)
        conv_shape = conv_model.output_shape[1:]
        fc_model = Sequential(get_fc_layers(0.5, conv_shape))
        for l1, l2 in zip(fc_model.layers, fc_layers):
            weights = l2.get_weights()
            l1.set_weights(weights)  # <------ raises the error below

The error:

ValueError: You called ‘set_weights(weights)’ on layer "batchnormalization_xx" with a weight list of length 0, but the layer was expecting 4 weights. Provided weights: []…

Every time I rerun the cell, xx increases, and when I look at the model summary after the cell, the number shown there is always xx - 1.

Not sure I explained that very well.

So far I have discovered that dropout is causing the problem in this weight setting. The layers match up until layer 4 is reached. At that point we try to set the batchnormalization weights from a dropout layer, which of course has no weights. Now I have to work out how to solve this.

If the layers have to match, then the only way I can see to make them match is to add dropout to the l1 layers or remove dropout from the l2 layers. I tried the latter by commenting the dropout out, which didn’t seem to work.

I figured it out:

get_fc_layers uses batch normalisation, so calls to vgg should use vgg16BN, or the BN should be removed from get_fc_layers.

Hope this helps if you have had a similar issue.
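A small sketch of how the mismatch above could be caught earlier, assuming only that each layer exposes `name`, `get_weights()` and `set_weights()` the way Keras layers do. `copy_weights_checked` is a hypothetical helper and `FakeLayer` is a stand-in used only so the example runs without Keras.

```python
def copy_weights_checked(target_layers, source_layers):
    """Copy weights layer by layer, failing early with a readable message on a mismatch."""
    for idx, (dst, src) in enumerate(zip(target_layers, source_layers)):
        src_weights = src.get_weights()
        expected = len(dst.get_weights())
        if len(src_weights) != expected:
            raise ValueError(
                "layer %d: %s expects %d weight arrays but %s provides %d"
                % (idx, dst.name, expected, src.name, len(src_weights)))
        dst.set_weights(src_weights)

class FakeLayer:
    """Stand-in for a Keras layer (illustration only, not a Keras class)."""
    def __init__(self, name, weights):
        self.name = name
        self._weights = list(weights)

    def get_weights(self):
        return list(self._weights)

    def set_weights(self, weights):
        self._weights = list(weights)

# A batchnorm-like layer (4 weight arrays) paired with a dropout-like layer (none).
new_layers = [FakeLayer("dense_new", [0, 0]), FakeLayer("batchnormalization_new", [0, 0, 0, 0])]
old_layers = [FakeLayer("dense_old", [1, 2]), FakeLayer("dropout_old", [])]
try:
    copy_weights_checked(new_layers, old_layers)
except ValueError as err:
    print(err)
```

The message names both layers, which makes it easier to see that a batch-norm model is being paired with a model that has no batch norm.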

My experience was that the ensemble results don’t match the position on the leaderboard. It is overfitting.

I took the MNIST ensemble and merged it in to implement the dogscats-ensemble. The result: I moved up the leaderboard 150 places compared with the original dogscats-ensemble (0.06668).

Changing the notebooks is quite challenging without an XML-type editor.

I want to change my latest version to include Jeremy’s resnet50, but I am having problems fine-tuning the model, i.e. getting a dense 2-way output. I can remove (pop) the end layers, or create the model with include_top=False, but if I try to add batch norm as in the ensemble’s three layers, I end up passing parameters into batch norm that it does not expect. Not sure what I am doing wrong.

Joining the party a little late. The Dogs vs Cats competition is closed; however, I went ahead and submitted my file just to see where I was.

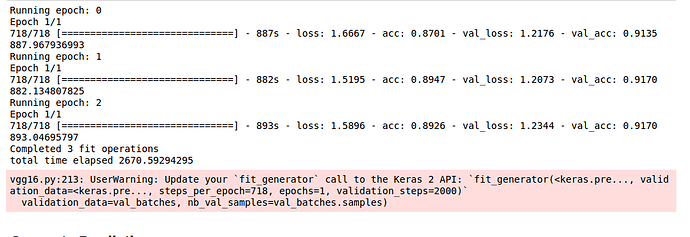

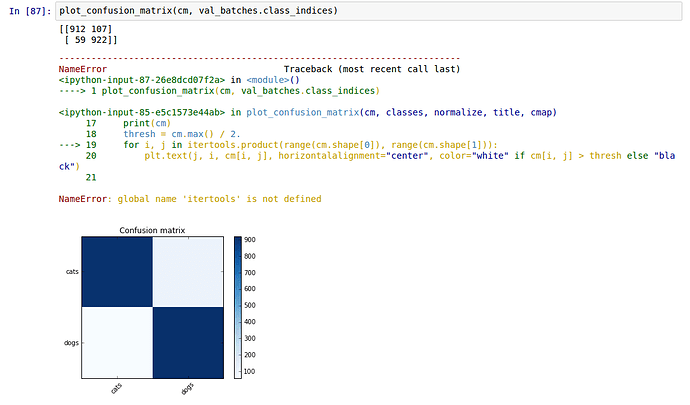

I was getting a validation accuracy of 0.9170 after 3 epochs, following the notebook step by step. However, my logloss was pretty terrible. Initially I clipped at 0.025/0.975 and got a logloss of 0.33. I then changed to 0.05/0.95 as in the notebook and it improved slightly.
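A rough sketch of why the clip value only helps a little here. It assumes every prediction is saturated at one of the clip bounds and that test accuracy equals the 0.917 validation accuracy; both are assumptions, not measurements. `saturated_log_loss` is a hypothetical helper name.

```python
import math

def clip(p, low, high):
    """Clamp a probability into [low, high]."""
    return min(max(p, low), high)

def log_loss(labels, probs):
    """Mean binary cross-entropy; labels are 0/1, probs are P(label == 1)."""
    total = 0.0
    for y, p in zip(labels, probs):
        total += -math.log(p) if y == 1 else -math.log(1.0 - p)
    return total / len(labels)

def saturated_log_loss(accuracy, low):
    """Log loss if every prediction sits exactly at low or 1 - low."""
    return -(accuracy * math.log(1.0 - low) + (1.0 - accuracy) * math.log(low))

for low in (0.025, 0.05):
    print(low, round(saturated_log_loss(0.917, low), 4))
```

Under those assumptions the two clip settings give roughly 0.33 and 0.30, which is in the same range as the score reported above. That suggests, without proving it, that the confident wrong answers from the 0.917 accuracy dominate the loss, and clipping can only cap how much each one costs.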

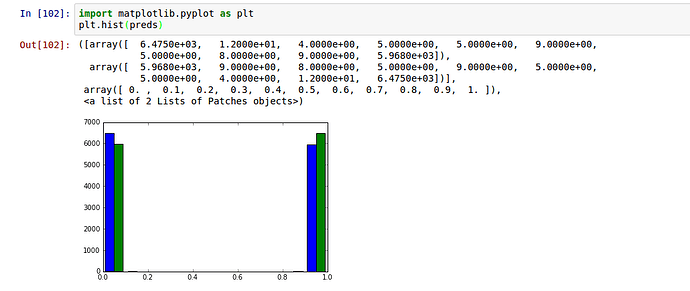

Dogs and Cats predictions before clipping

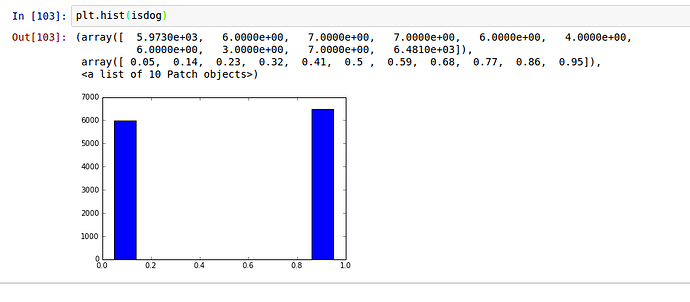

isdog predictions after clipping at 0.05/0.95

Any pointers would be hugely appreciated…

Thanks

There are only 36 images within isdog where the probability is between 0.2 and 0.8 and 14 images where isdog is between 0.4 and 0.6
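For anyone wanting to run the same check on their own predictions, a minimal sketch follows. The inclusive bounds are an assumption, and the `isdog` values below are made up for illustration; they are not the data from this post.

```python
def count_between(probs, low, high):
    """Count probabilities with low <= p <= high (inclusive bounds are an assumption)."""
    return sum(1 for p in probs if low <= p <= high)

# Made-up example values, not the poster's predictions.
isdog = [0.05, 0.95, 0.55, 0.3, 0.95, 0.05, 0.45, 0.75]
print(count_between(isdog, 0.2, 0.8))
print(count_between(isdog, 0.4, 0.6))
```

A small count in the middle ranges means most predictions are already near the extremes, so clipping affects nearly every row of the submission.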

What are the ‘_from’ and ‘to’ variables?