Dear all,

I wonder why the accuracy is so low. I used a random forest as Jeremy taught. Here is the program:

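import math
import pandas as pd
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import train_test_split

df_raw = pd.read_csv('blood-train.csv', low_memory=False) # read csv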

df_real = df_raw.drop(df_raw.columns[[0]], axis=1) # drop the first column because it is the patient id

X = df_real.iloc[:,0:-1] # features for training

Y = df_real["Made Donation in March 2007"] # target

X_train, X_valid, Y_train, Y_valid = train_test_split(X, Y, test_size=0.2, random_state=0)

def rmse(x,y): return math.sqrt(((x-y)**2).mean())

def print_score(m):
    # [train RMSE, valid RMSE, train R^2, valid R^2, (OOB score if available)]
    res = [rmse(m.predict(X_train), Y_train), rmse(m.predict(X_valid), Y_valid),
           m.score(X_train, Y_train), m.score(X_valid, Y_valid)]
    if hasattr(m, 'oob_score_'): res.append(m.oob_score_)
    print(res)

def plot_fi(fi): return fi.plot('cols', 'imp', 'barh', figsize=(12,7), legend=False)

But when I run

m = RandomForestRegressor(n_jobs=-1)

%time m.fit(X_train, Y_train)

print_score(m)

The result:

Wall time: 125 ms

[0.22663014312799548, 0.45166340510451114, 0.7044400607156713, 0.03173836585303136]
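To make the output easier to read: going by print_score above, the four numbers are, in order, the training RMSE, the validation RMSE, the training R² and the validation R² (m.score on a regressor returns R²). Here is a small sketch that just labels the numbers from this run; the label names are my own shorthand, not anything from the library.

```python
# Values copied from the run above; the label names are hypothetical shorthand.
res = [0.22663014312799548, 0.45166340510451114,
       0.7044400607156713, 0.03173836585303136]
labels = ["rmse_train", "rmse_valid", "r2_train", "r2_valid"]

# Pair each score with its label, in the order print_score builds the list.
labelled = dict(zip(labels, res))
for name, value in labelled.items():
    print(f"{name:>10}: {value:.3f}")

# Difference between training and validation R^2 for this run.
r2_gap = labelled["r2_train"] - labelled["r2_valid"]
print(f"    r2 gap: {r2_gap:.3f}")
```

So the model scores about 0.70 R² on the training set but only about 0.03 on the validation set.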

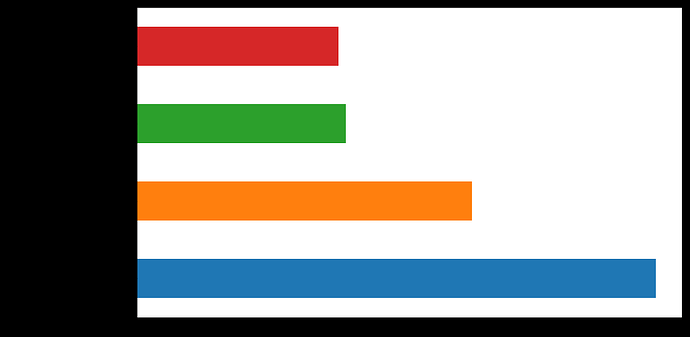

fi = rf_feat_importance(m, X_train); plot_fi(fi);

Red = number of donations

Green = total volume donated

Yellow = months since last donation

Blue = months since first donation

X_train_new = X_train.drop(X_train.columns[[1,2]], axis=1) # drop columns 1 and 2: number of donations and total volume donated

X_valid_new = X_valid.drop(X_valid.columns[[1,2]], axis=1) # same two columns for the validation set

m = RandomForestRegressor(n_estimators=5, n_jobs=-1) # fitted and scored with X_train_new and X_valid_new

The result:

[0.30293050804958604, 0.496225744911274, 0.47192422025946107, -0.16874966640774147]

m = RandomForestRegressor(n_estimators=1, max_depth=3, bootstrap=False, n_jobs=-1)

The result:

[0.3867465810985227, 0.4569195327227697, 0.13927743474533072, 0.009071399835752114]

m = RandomForestRegressor(n_estimators=1, bootstrap=False, n_jobs=-1)

The result:

[0.27112196782865156, 0.510299890084396, 0.5770007098922596, -0.2359868915512846]

m = RandomForestRegressor(n_estimators=10, max_depth=3,min_samples_leaf=10, max_features=0.6, n_jobs=-1, oob_score=True)

The result:

[0.3881006251352926, 0.4453275626764299, 0.13323990778728678, 0.05871298695045557, 0.05691055911963894]

m = RandomForestRegressor(n_jobs=-1)

The result:

[0.297515872670436, 0.48697007068137027, 0.490633353613878, -0.1255569023257912]

Does anyone know why this happens?

Thanks.