I finished an implementation of SSD with Keras this week.

I can send you the wiki page I made explaining how it works in detail (I need to review it once more to make sure there are no mistakes).

Thanks Ben!

Just found this as well: I searched for bbox in the new repo and this came up.

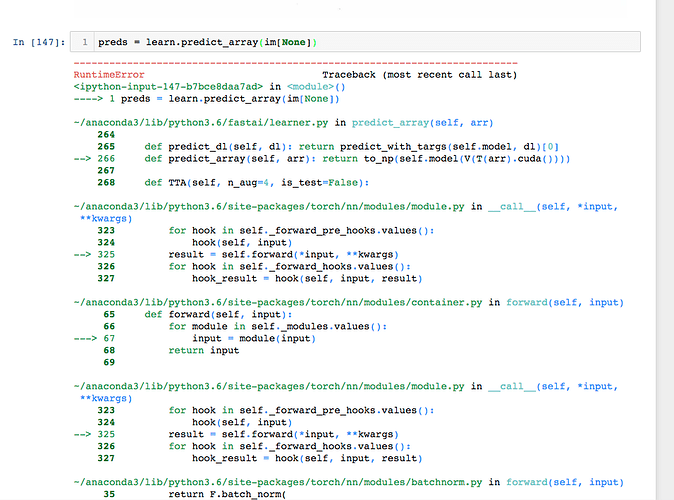

@jeremy I'm a little bit stuck. How do I look at the predicted bounding boxes after using learn.fit? Also, how do I pass in an individual image using this method?

I tried this method, which worked on the classification task, but it didn’t seem to work here.

trn_tfms, val_tfms = tfms_from_model(arch, sz)

img = Image.open(PATH+fn)

im = trn_tfms(np.array(img))

preds = learn.predict_array(im[None])

y = np.argmax(preds)

data.classes[y]

- Update

- The outputs of the learn.predict() method are the bounding box positions.

- This worked for me to get an individual prediction, based on a previous answer on the forum.

io_img = io.imread(img_url)

im = self.trn_tfms(np.array(io_img)/255.0)

preds = to_np(self.learn.models.model(V(T(im[None]))))

- I'm using it in an API, so it might look a little different.
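To actually look at a predicted box on the original image, the four numbers usually need to be mapped back from the resized input to the original pixel grid. Here is a minimal sketch of that step. The helper name `to_pil_box` is made up for illustration, and it assumes the prediction is in (top, left, bottom, right) order, in pixels of the sz x sz image the model saw, with the image squished rather than cropped. None of that is confirmed in this thread, so check it against your own transforms.

```python
def to_pil_box(pred, sz, orig_w, orig_h):
    """Rescale one predicted box to the original image's pixel grid.

    ASSUMPTIONS (hypothetical, not confirmed by the thread): `pred` holds
    four numbers in (top, left, bottom, right) order, in pixels of the
    sz x sz model input, and the image was squished (not cropped) to sz.
    Returns (left, upper, right, lower) as whole pixels.
    """
    top, left, bottom, right = (float(v) for v in pred)
    scale_x, scale_y = orig_w / sz, orig_h / sz

    def clamp(value, upper_limit):
        # Round to a whole pixel and keep it inside the image.
        return int(min(max(round(value), 0), upper_limit))

    return (
        clamp(left * scale_x, orig_w),
        clamp(top * scale_y, orig_h),
        clamp(right * scale_x, orig_w),
        clamp(bottom * scale_y, orig_h),
    )
```

The returned tuple is in the (left, upper, right, lower) order that PIL uses, so it can be passed to `ImageDraw.Draw(img).rectangle(box, outline="red")` to draw the box, or to `img.crop(box)` to cut it out.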

Here is the document SSD-Description.pdf (2.4 MB).

Please note that it is not a tutorial on how to implement SSD but a summary of the information I collected while studying the model. I hope it can still be helpful.

Regarding the implementation, I encourage everyone to look at the following repo:

It is extremely useful to understand the details.

Hi there, I have also implemented SSD to detect a custom object. How should I crop out the part of the image that is inside the detected box? Is there a way?

@chingjunehao: The output of the SSD detector is a tensor containing N_box “box tensors”. Each “box tensor” contains the data relative to one box generated by the model, the data being (your data order might be different) [x_center, y_center, width, height, x_center_variance, y_center_variance, width_variance, height_variance, class1_score, …, classN_score, x_center_offset, y_center_offset, width_offset, height_offset].

The scores and offsets are the parameters that SSD predicts and the other parameters are fixed.

So in order to crop your image, you need to follow the steps below:

- Have a decoder function to compute the predicted position of the boxes in pixels.

- Filter the boxes to keep only the highest-scoring ones: drop low-confidence boxes, then apply non-max suppression so that overlapping boxes collapse to the best one.

- Finally use the positions of the remaining boxes to crop your image.
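The three steps above can be sketched roughly as follows. This is a minimal NumPy sketch, not the code from any particular SSD repo: the function names (`decode_boxes`, `nms`, `crop_boxes`), the (cx, cy, w, h) ordering, the relative [0, 1] anchor coordinates, and the centroid decoding formula are all assumptions, so adapt them to the output layout of your own implementation.

```python
import numpy as np


def decode_boxes(anchors, variances, offsets, img_w, img_h):
    """Step 1: anchors + predicted offsets -> pixel boxes (x1, y1, x2, y2).

    ASSUMPTION: all inputs have shape (N, 4) in (cx, cy, w, h) order and
    the anchors are in relative [0, 1] coordinates.
    """
    cx = offsets[:, 0] * variances[:, 0] * anchors[:, 2] + anchors[:, 0]
    cy = offsets[:, 1] * variances[:, 1] * anchors[:, 3] + anchors[:, 1]
    w = np.exp(offsets[:, 2] * variances[:, 2]) * anchors[:, 2]
    h = np.exp(offsets[:, 3] * variances[:, 3]) * anchors[:, 3]
    boxes = np.stack([cx - w / 2, cy - h / 2, cx + w / 2, cy + h / 2], axis=1)
    return boxes * np.array([img_w, img_h, img_w, img_h])


def iou(box, boxes):
    """Intersection over union of one corner box against many."""
    x1 = np.maximum(box[0], boxes[:, 0])
    y1 = np.maximum(box[1], boxes[:, 1])
    x2 = np.minimum(box[2], boxes[:, 2])
    y2 = np.minimum(box[3], boxes[:, 3])
    inter = np.clip(x2 - x1, 0, None) * np.clip(y2 - y1, 0, None)
    area = (box[2] - box[0]) * (box[3] - box[1])
    areas = (boxes[:, 2] - boxes[:, 0]) * (boxes[:, 3] - boxes[:, 1])
    return inter / (area + areas - inter)


def nms(boxes, scores, iou_threshold=0.45):
    """Step 2: greedy non-max suppression; returns indices of kept boxes."""
    order = np.argsort(scores)[::-1]  # highest score first
    keep = []
    while order.size > 0:
        best, rest = order[0], order[1:]
        keep.append(int(best))
        # Drop every remaining box that overlaps the kept one too much.
        order = rest[iou(boxes[best], boxes[rest]) <= iou_threshold]
    return keep


def crop_boxes(image, boxes):
    """Step 3: cut each box out of an H x W x C image array."""
    img_h, img_w = image.shape[:2]
    crops = []
    for x1, y1, x2, y2 in boxes:
        x1, y1 = max(int(round(x1)), 0), max(int(round(y1)), 0)
        x2, y2 = min(int(round(x2)), img_w), min(int(round(y2)), img_h)
        crops.append(image[y1:y2, x1:x2])
    return crops
```

In practice you would run `nms` per class on the boxes whose class score passes a confidence threshold, then call `crop_boxes(image, boxes[keep])`.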

Interesting thread!

I’m using a combination of inception + ssd to train my own custom dataset. After weeks of painful annotations, I finally got to a good level of accuracy on the object categories.

Strangely though, I can see a consistent pattern in the bounding box predictions across all classes. They all seem to be expanded on the right side to include extra space, yet are tightly bound on the left (especially bottom-left). Check out the images below. I’m not exaggerating: EVERY prediction comes out this way, so I’m pretty sure it has something to do with my data or a possible bug.

Just wondering if anyone has faced a similar issue or has any ideas on why this might be happening? Any pointers would be great!

Yes, this implementation is amazing. Reading the documentation and the code for the ground truth encoding process helped my understanding a lot. It’s actually a lot better (more comprehensive, better documentation, all original models provided) than the one linked to further up in this thread:

Are you looking for something like this to save part of the image?

from PIL import Image

img = Image.open('saved_image.png')

print(img.size)

croppedIm = img.crop((490, 980, 1310, 1217))

croppedIm.save('crop.png')

(late answer)

Hello All

After reading this, I tried object localization on my own data, which contains a single object per image and no class labels, i.e. I only have to predict the bounding box of the object without classifying it, since the objects are very different from each other. Most of the objects are grocery items on a white background. I tried adding a simple regressor head on top of ResNet and MobileNet, but in vain: the results aren’t good. Also, some of my test images contain two adjacent objects, like a pair of shoes, whereas in my training data there’s only one shoe per image. This is one thing I’m not able to tackle. Can somebody help?